Recently, I bumped into someone I had not seen for nearly 20 years. She asked me how I was getting on with my research into making music using Artificial Intelligence. I told her that AI, as we know it today, is old hat, and that I am now exploring quantum computers in composition and performance. »Whaaat!« she exclaimed. »I thought AI would be the end of the road for computer music technology. Is that not so?« … »And what the heck is a quantum computer?«

Indeed, I have been using AI to compose music since the 1990s. The impact of AI on music – and society in general – seems to be the buzz of the moment. But frankly, there is nothing extraordinary about making music with AI. Nevertheless, AI is, of course, still exciting for music. And I have a hunch that music technology is on the verge of a significant leap, probably powered by AI, but not necessarily so. I am thinking of new kinds of computing hardware, in particular, »quantum computers.«

To better understand why it makes sense to explore emerging quantum computing technology in music, let us first contemplate some historical facts and milestones. Then, we will delve into more technical details and examples.

Most people do not realise that composers started experimenting with computers long before most of the industrial, commercial, and social demands for computers that exist today had emerged. In fact, over the last 70 years or so, progress in computing technology and musical innovation have gone hand in hand. Computers have played a pivotal part in the development of today’s burgeoning music industry, and composers keen to explore the potential of computing technology for their métier played an important role in these developments.

For instance, in the early 1950s, Australia’s Council for Scientific and Industrial Research (CSIR) installed a loudspeaker on its Mk1 computer to track the progress of a program using sound (Figure 1). Subsequently, Geoff Hill, a mathematician with a musical background, programmed this machine to play back folk tunes.

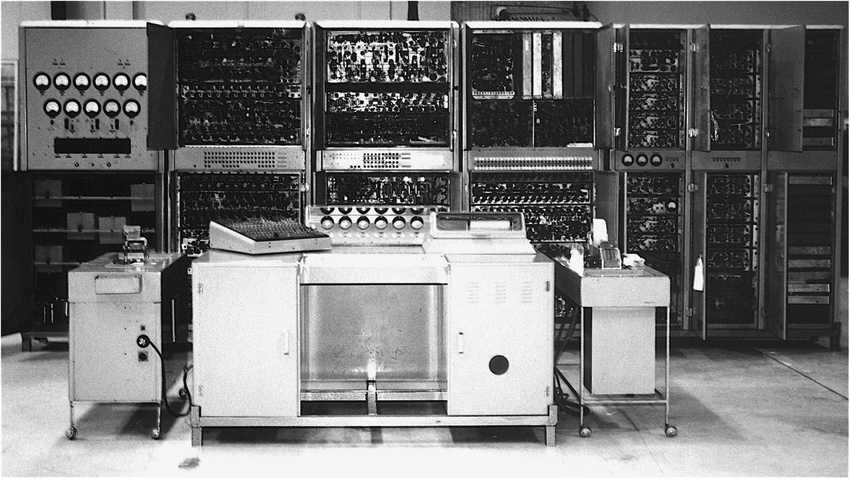

But the first significant milestone of computer music took place in 1957 at the University of Illinois at Urbana-Champaign, USA, with the composition »Illiac Suite« by Lejaren Hiller and Leonard Isaacson. Hiller, then a professor of chemistry, collaborated with mathematician Isaacson to program the ILLIAC machine to compose a string quartet.

The ILLIAC, short for Illinois Automatic Computer, was one of the first mainframe computers built in the USA, comprising thousands of vacuum tubes. Hiller and Isaacson programmed this machine with rules of harmony and counterpoint. The output was transcribed manually into a musical score for a string quartet. Whereas the Mk1 merely played back an encoded tune, like a pianola, ILLIAC was programmed with algorithms to compose music. Hence »Illiac Suite« is often cited as a pioneering piece of computer-generated music.

Various important inventions and developments have taken place since, notably the invention of the transistor and subsequently the development of the microchip. The microchip enabled the manufacturing of computers that became progressively more accessible to a wider sector of the population.

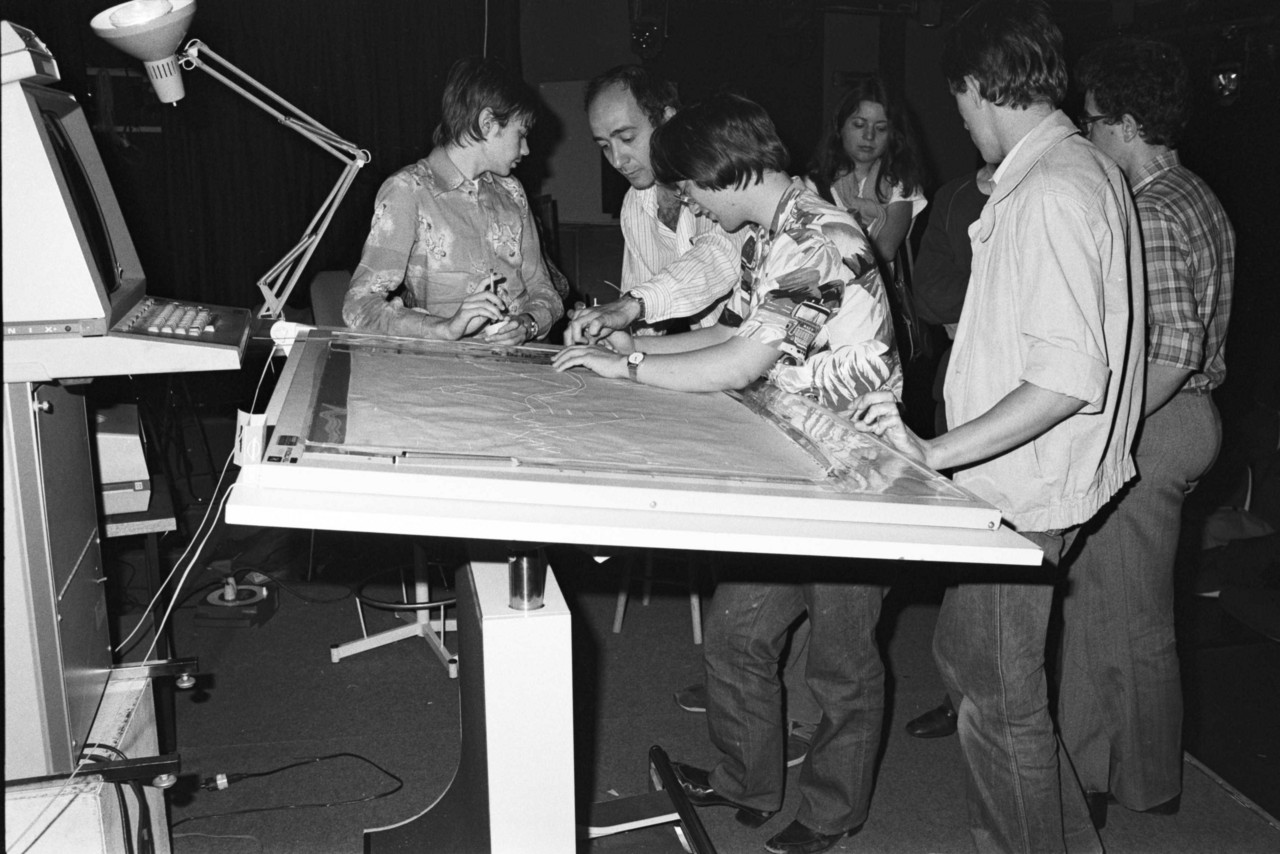

An increasing number of musicians have embraced computing technology since then. Some even started to build bespoke computer-based devices to create sounds and music. One remarkable example is the UPIC system, built in the 1970s by Iannis Xenakis, in collaboration with engineers at CEMAMu, or Centre d'Etudes de Mathématique et Automatique Musicales, in Paris (Figure 2).

Xenakis is known for having pioneered the use of computers to run statistical models to generate materials for musical compositions. However, his UPIC system allowed for something rather different at the time. One could draw sketches on an architect's drawing board and a computer would translate the drawings into sound. A notable composition of this time is »Mycenae-Alpha,« completed in 1978. Xenakis used the UPIC to generate sounds, which he further processed in his studio to compose the piece.

Another significant milestone took place in the early 1980s at IRCAM with »Répons.« This is an unprecedented composition by the celebrated French conductor Pierre Boulez. IRCAM, or Institut de Recherche et Coordination Acoustique/Musique, is a renowned centre for research into music and technology founded in 1977 in Paris by Boulez himself.

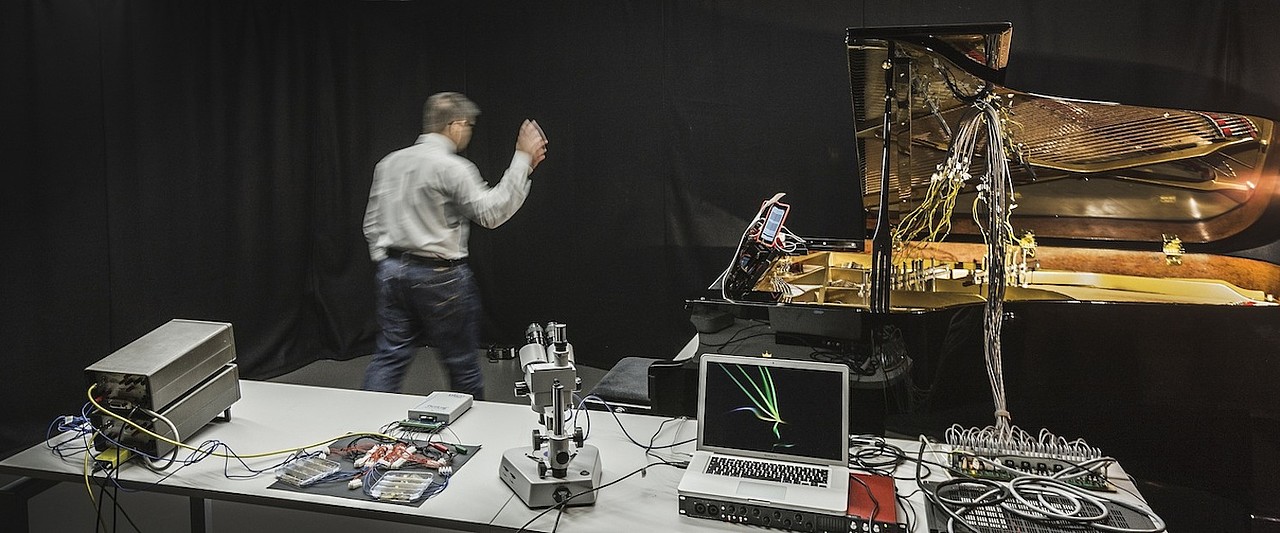

»Répons,« for chamber orchestra and six solo percussionists, was the first significant piece of classical music to use digital computing technology to perform live on stage. The machine »listened« to the soloists and responded with synthesised sounds on the spot during a performance. To achieve this, Boulez used a novel system, called the 4X, developed by Italian physicist Giuseppe Di Giugno and his team (Figure 3).

The UPIC and »Mycenae-Alpha,« and the 4X System and »Répons« epitomise the beginning of an era of increasingly widespread use of digital computers to develop new approaches to composition and perform interactively with musicians. They signify the beginning of our present time, where personal computers, laptops, notebooks, tablets, and even smartphones are used in musical composition and performance.

Also, let us not forget AI. As computers became increasingly available and user-friendly, composers started to leverage AI to make music. In the 1980s, the composer David Cope, now Emeritus Professor at the University of California Santa Cruz, emerged as one of the pioneers of musical AI in the USA. Cope developed software that analysed a corpus of scores by a given classical composer and generated new pieces imitating that composer’s style. He is known for having programmed his system to generate 5,000 chorales in the style of J. S. Bach. This raised a lot of eyebrows in classical music circles. Another important development was George Lewis’s Voyager system for real-time improvisation, which allowed non-hierarchical communication between computer and artist. The system was also conceived in the 1980s, based on mathematical models that have since become widespread in AI technology.

We should bear in mind, however, that AI is merely software. And software needs computing hardware to work. Today, AI runs on a type of computer hardware architecture that dates back to the 1940s. The fundamentals of computing have not changed much since the Mk1 and ILLIAC machines. The difference is that today’s computers are much smaller and have many more switches than their predecessors. They work with microprocessors made up of billions of tiny switches that are activated by electric signals. Values 1 and 0 reflect the on and off states of the switches. Each of these switches embodies a binary digit, or bit. The bit is the smallest unit of information of a digital processor.

I have been on the lookout for new types of hardware since the turn of the century, and quantum computing has been on my radar for a while. To begin with, it was all theoretical, weird, and difficult to grasp. There were no actual quantum computers available two decades ago. It was only in 2018 that I found out that Rigetti, a start-up firm based in Berkeley, California, was making its quantum computer available to researchers. In no time I packed my suitcase and went to Berkeley to see it all for myself. The rest is history.

I completed »Zeno,« my first fully fledged composition using quantum computers, in late 2018. »Zeno« is a rather exploratory piece for bass clarinet and electronic sounds. During the performance, a local laptop listened to the clarinet and extracted sequencing rules. These rules were converted into quantum algorithms and transmitted through the internet to the quantum computer in California. Then, the quantum machine ran the algorithms and sent the results back to my laptop, which rendered them into sounds.

Quantum computers are not generally available yet; they are still being developed. It is often said that quantum computers today are at a stage of development comparable to that of the large mainframe computers of the 1950s. But the industry is making terrific progress. Various software simulators are available, and a few companies already provide access to quantum hardware via the cloud (Figure 4). Thus, musicians can experiment with them even now.

»Zeno« is a milestone for me. What I achieved with a rudimentary quantum computer sporting only a few qubits would require rather sophisticated AI programming running on a state-of-the-art digital desktop machine. This reinforced my hunch that music technology is on the verge of a significant leap. The thought of what these new machines might offer musicians in 20 or even 10 years is mind-boggling.

But what is quantum computing? How can a quantum algorithm represent musical rules? How can the results of processing a quantum algorithm make music? First, let us see what makes a quantum computer different from a digital one.

A quantum computer deals with information encoded as quantum bits or qubits. The qubit is to a quantum computer what a bit is to a digital one: it is a basic unit of information.

Qubits are not switches. In hardware, qubits live in the subatomic world, where they are subject to the laws of quantum mechanics. This is why qubits process information in a fundamentally different way from digital bits.

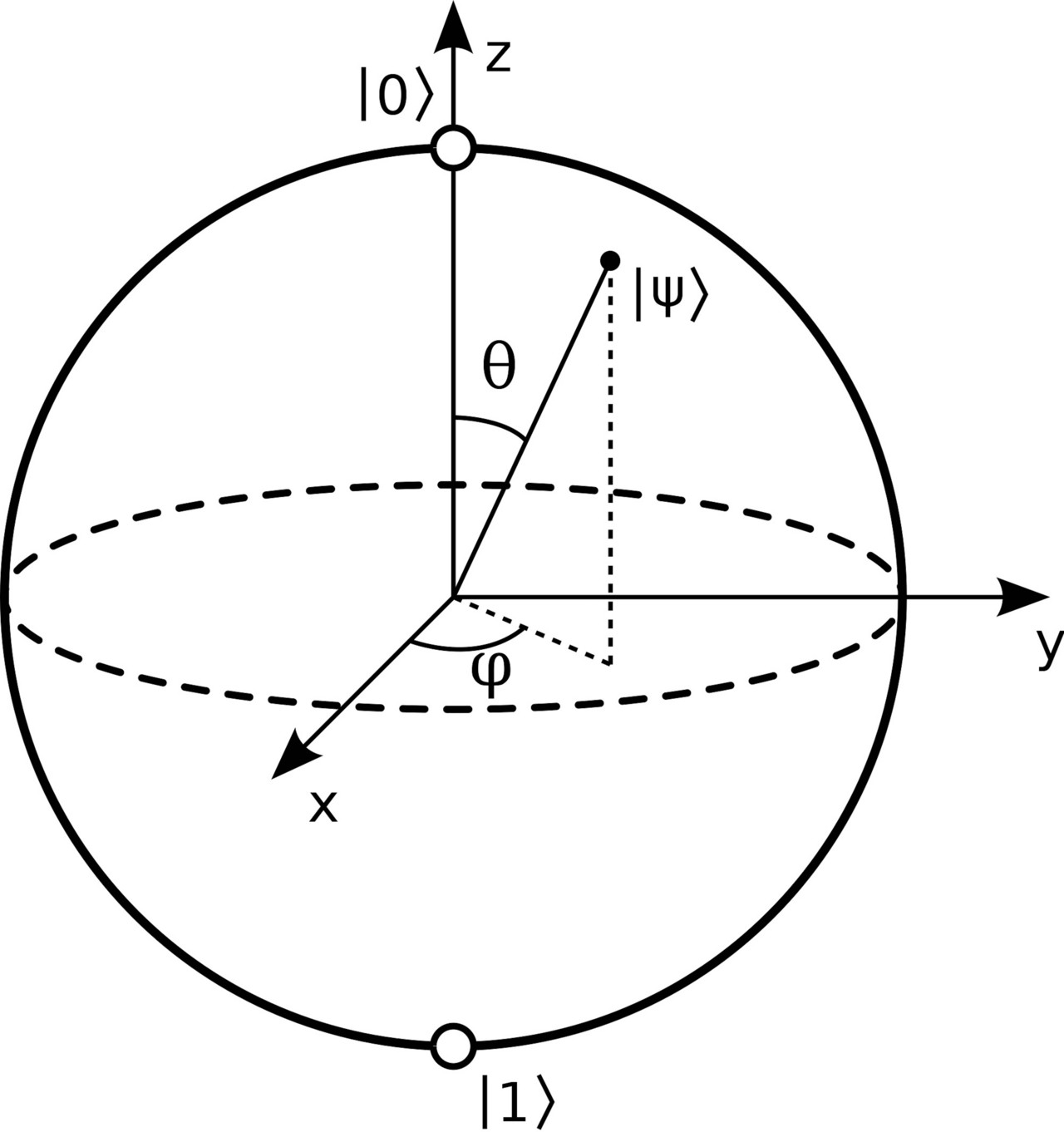

To picture a qubit, imagine a transparent sphere with opposite poles denoted by |0⟩ and |1⟩, which is the convention used to represent quantum states. From its centre, a vector whose length is equal to the radius of the sphere can point anywhere on the surface. In quantum mechanics, this sphere is called the »Bloch sphere« and the vector is referred to as a »state vector.« The state vector of a qubit represents a »quantum state« and can be described in spherical coordinates using two angles: θ, measured from the |0⟩ pole, and φ, measured around the equator, as shown in Figure 5.
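The two angles translate directly into a pair of complex numbers for the |0⟩ and |1⟩ components of the state. The short Python sketch below uses the standard textbook convention, cos(θ/2) for |0⟩ and e^{iφ}·sin(θ/2) for |1⟩, with θ measured from the |0⟩ pole; the function name `bloch_to_amplitudes` is illustrative and not taken from any particular library.

```python
import cmath
import math


def bloch_to_amplitudes(theta, phi):
    """Return the (|0>, |1>) components of the state vector that points at
    polar angle theta and azimuthal angle phi on the Bloch sphere."""
    alpha = complex(math.cos(theta / 2))
    beta = cmath.exp(1j * phi) * math.sin(theta / 2)
    return alpha, beta


# North pole (theta = 0) is |0>; south pole (theta = pi) is |1>.
print(bloch_to_amplitudes(0.0, 0.0))      # |0>: alpha is 1 and beta is 0
print(bloch_to_amplitudes(math.pi, 0.0))  # |1>: alpha is ~0 and beta is 1
# A point on the equator gives equal magnitudes for both components.
print(bloch_to_amplitudes(math.pi / 2, math.pi / 4))
```

Whatever the angles, the squared magnitudes of the two numbers always add up to 1, which is what keeps the vector on the surface of the sphere.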

It is popularly said that a qubit can take the values 0 and 1 at the same time, but this is not entirely accurate. This misconception often arises because a state vector can point anywhere between the north and south poles of the Bloch sphere. When it does so, the qubit is in a state of »superposition« between |0⟩ and |1⟩. But the qubit will always yield either 0 or 1 when we read it.

In a state of superposition, the state vector is normally described as a linear combination of |0⟩ and |1⟩. For instance, state vector |Ψ⟩ is notated as |Ψ⟩ = α|0⟩ + β|1⟩, where the coefficients α and β represent tendencies towards the north or the south, respectively. In simpler terms, the position of the vector on the Bloch sphere indicates whether the qubit is more likely to yield 0 (when pointing to a region above the equator line) or 1 (when pointing below the equator line) when it is read out. More precisely, |α|² and |β|² give the probabilities of reading 0 and 1, respectively, so they always add up to 1. In quantum computing terminology, α and β are referred to as amplitudes and the act of reading a qubit is referred to as measuring.
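The link between amplitudes and read-outs can be simulated on an ordinary computer. The minimal Python sketch below (function names are illustrative) applies the Born rule, P(0) = |α|² and P(1) = |β|², and draws 1,000 simulated measurements of a qubit whose state vector points 60 degrees away from the north pole, that is, above the equator, so 0 should come up about three times as often as 1.

```python
import math
import random


def measurement_probabilities(alpha, beta):
    """Born rule: P(0) = |alpha|^2 and P(1) = |beta|^2 for a normalised state."""
    p0, p1 = abs(alpha) ** 2, abs(beta) ** 2
    assert math.isclose(p0 + p1, 1.0, abs_tol=1e-9), "state must be normalised"
    return p0, p1


def measure(alpha, beta, rng=random):
    """Simulate a single read-out: return 0 or 1 with Born-rule probabilities."""
    p0, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p0 else 1


# State vector 60 degrees from the north pole (above the equator).
theta = math.radians(60)
alpha, beta = math.cos(theta / 2), math.sin(theta / 2)
print(measurement_probabilities(alpha, beta))  # roughly (0.75, 0.25)

rng = random.Random(1)  # fixed seed so the run is repeatable
counts = [0, 0]
for _ in range(1000):
    counts[measure(alpha, beta, rng)] += 1
print(counts)  # tally of zeros and ones
```

Each individual read-out is unpredictable; only the tally over many runs approaches the 3:1 ratio set by the amplitudes.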

The weirdest thing in all this is that quantum computers process information with qubits in superposition. A good part of the art of programming a quantum computer involves manipulating qubits while they are in such an indeterminate state.

Performing operations on groups of qubits in superposition enables manipulations on many possible configurations of information simultaneously. To put this into perspective, a digital processor deals with information represented by a series of bits that are switched on and off individually. For instance, with 3 bits one can represent the numbers 0 to 7 as 000, 001, 010, 011, 100, 101, 110, and 111, respectively. A digital processor handles these numbers one at a time. With 3 qubits, however, a quantum processor can hold all eight in superposition at once, which allows for processing them in parallel.
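This growth is easy to see in a statevector simulation, which stores one amplitude for each of the 2ⁿ possible bit patterns of n qubits. The minimal pure-Python sketch below applies a standard Hadamard gate to each of three qubits that start in |000⟩, yielding the same amplitude, 1/√8, for all eight patterns from 000 to 111. A measurement still returns only one of these patterns; the art of quantum programming lies in shaping the amplitudes before that happens.

```python
import math

# Standard Hadamard gate as a 2x2 matrix.
S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]


def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 gate to qubit `target` of a statevector.
    Qubit 0 is the least significant bit of the basis-state index."""
    new_state = list(state)
    stride = 1 << target
    for i in range(len(state)):
        if (i & stride) == 0:  # visit each pair (i, i | stride) exactly once
            j = i | stride
            a0, a1 = state[i], state[j]
            new_state[i] = gate[0][0] * a0 + gate[0][1] * a1
            new_state[j] = gate[1][0] * a0 + gate[1][1] * a1
    return new_state


n = 3
state = [1.0] + [0.0] * (2 ** n - 1)  # all three qubits start in |000>
for q in range(n):
    state = apply_single_qubit_gate(state, H, q)

for k, a in enumerate(state):
    print(f"|{k:03b}>  amplitude {a:.4f}  probability {a * a:.3f}")
```

With 20 qubits the same list would need 2²⁰ = 1,048,576 entries, which is one reason why simulating large quantum computers on digital machines quickly becomes impractical.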

With 20 qubits, we would be able to handle simultaneously over one million (2²⁰ = 1,048,576) pieces of information represented as quantum states in superposition. Currently, some quantum computers already have hundreds of qubits. This is not a big deal yet. But it is expected that they will have thousands or millions of qubits soon. There are several issues to be solved before these machines can leverage large numbers of qubits for computation. But this is not a question of if, but when.

The wonders of quantum computation do not end here. In addition to superposition, quantum programming involves »entangling« groups of qubits. Entangled qubits interact – or »interfere« – with one another and are no longer considered individually. They create a quantum system of their own. To unpack this, let us contemplate how the abstract notion of qubits relates to the physical world.

Consider the case of an electron. In quantum mechanics, the physical state of an electron is unknown until one observes it. Before it is observed, the electron is said to behave like a wave. When it is observed, it behaves like a particle. This phenomenon is referred to as the »wave-particle duality.« Qubits are special because they embody this duality.

Quantum systems can be described in terms of wave functions. A wave function depicts a quantum system as the sum of the possible states that it may fall into when it is measured. In other words, it defines what the particle might be like when the wave is observed.

In quantum computing, the higher the number of qubits used to construct a wave function, the higher the number of possible outcomes a system may yield. Each possible component of a wave function, which is also a wave, is scaled by a coefficient reflecting its relative weight. That is, some states are more likely than others. Metaphorically, think of a quantum system as the spectrum of a musical sound, where the different amplitudes of its various wave components give it its unique timbre.

As with sound waves, quantum wave components interfere with one another, constructively and destructively. In quantum physics, the interfering waves in a wave function are said to be »coherent.« The act of observing the wave function decoheres the waves. Again metaphorically, it is as if, when listening to a musical sound, one would perceive only a single spectral component: probably the one with the highest energy, but not always.
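The musical metaphor of constructive and destructive interference has an exact counterpart in the arithmetic of amplitudes. In the minimal sketch below (plain Python, standard Hadamard gate H), applying H once puts |0⟩ into an equal superposition. Applying it a second time makes the two contributions to |1⟩ cancel out while those to |0⟩ reinforce each other, so the qubit returns to |0⟩ with certainty.

```python
import math

s = 1 / math.sqrt(2)
H = [[s, s],
     [s, -s]]  # standard Hadamard gate


def apply(gate, state):
    """Multiply a 2x2 gate by a single-qubit state [alpha, beta]."""
    return [gate[0][0] * state[0] + gate[0][1] * state[1],
            gate[1][0] * state[0] + gate[1][1] * state[1]]


state = [1.0, 0.0]                 # |0>
superposed = apply(H, state)       # equal chance of reading 0 or 1
recombined = apply(H, superposed)  # s*s + s*s for |0>, s*s - s*s for |1>

print([round(a, 4) for a in superposed])  # [0.7071, 0.7071]
print([round(a, 4) for a in recombined])  # [1.0, 0.0]
```

Measuring `superposed` would give 0 or 1 at random, yet one more gate, applied before any measurement, makes the outcome certain: the randomness was hidden in the amplitudes, not lost.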

Superposition, entanglement, and interference are the properties that make quantum computing fundamentally different from digital computing. An important characteristic of quantum computers is that the results of reading out quantum states cannot be predicted with absolute certainty. There are methods to get around this. However, it is not uncommon to leverage quantum unpredictability for specific applications, including music. Indeed, composers have often embraced stochasticity; Xenakis, mentioned earlier, is a case in point. I bet he would have loved quantum computers.
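Entanglement and unpredictability can be seen together in a few lines of code. The sketch below builds the textbook Bell state (|00⟩ + |11⟩)/√2, using a Hadamard gate on qubit 0 followed by a CNOT from qubit 0 to qubit 1, with a plain Python list as the statevector. It is a generic illustration, not code from any of the systems described in this article. Each read-out is random, yet the two qubits always agree.

```python
import math
import random


def bell_state():
    """(|00> + |11>)/sqrt(2), built by H on qubit 0 then CNOT(0 -> 1).
    Index k of the list encodes the bits q1 q0 (qubit 0 = least significant)."""
    s = 1 / math.sqrt(2)
    # H on qubit 0 of |00>: the amplitudes of |00> and |01> both become s.
    state = [s, s, 0.0, 0.0]
    # CNOT flips qubit 1 wherever qubit 0 is 1: |01> becomes |11>.
    state[1], state[3] = state[3], state[1]
    return state


def sample(state, rng):
    """Draw one basis-state index with probability |amplitude|^2."""
    r, acc = rng.random(), 0.0
    for k, a in enumerate(state):
        acc += abs(a) ** 2
        if r < acc:
            return k
    return len(state) - 1  # guard against floating-point rounding


rng = random.Random(7)
state = bell_state()
outcomes = [format(sample(state, rng), "02b") for _ in range(10)]
print(outcomes)  # only '00' and '11' ever appear: the two qubits always agree
```

Neither qubit can be described on its own here: the list holds amplitudes only for joint patterns of both qubits, which is what it means for them to form »a quantum system of their own.«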

Now, let us examine two examples of how I have been using quantum computers to make music.

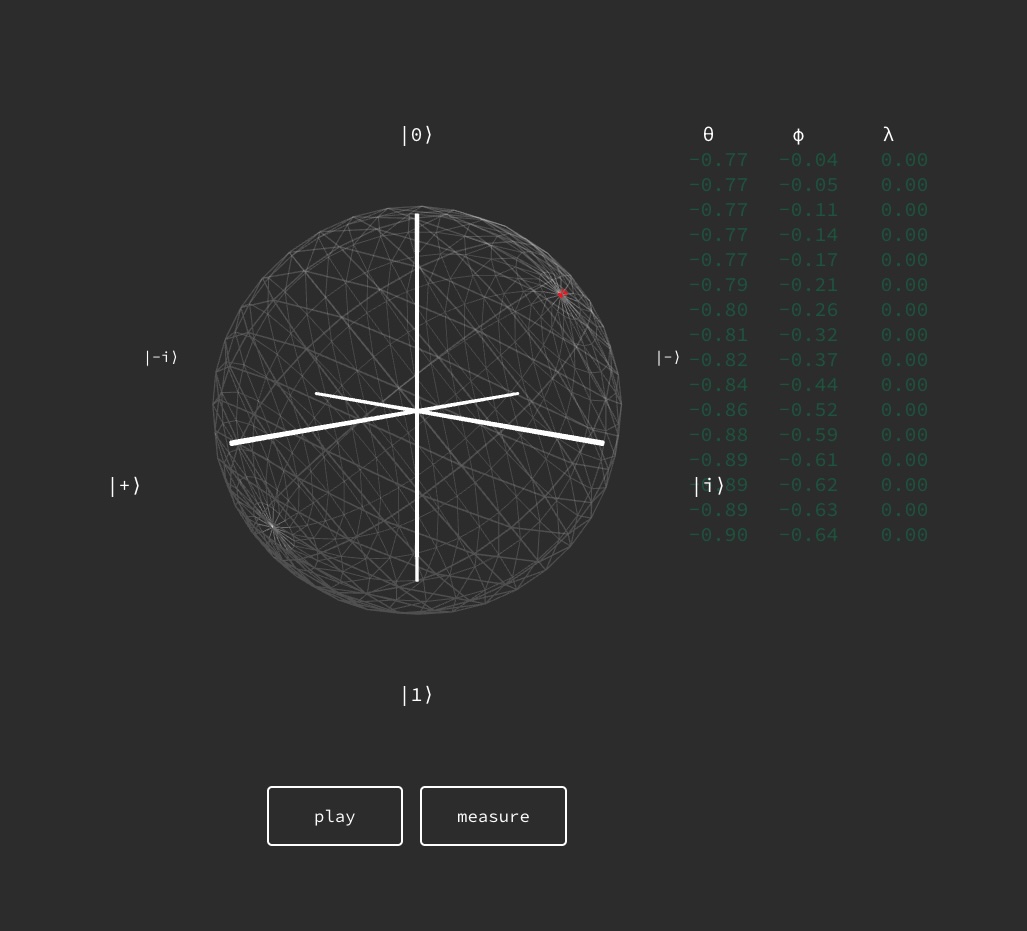

The first example involves Q1Synth, a system that synthesises sounds from a qubit’s state vectors and measurements. Q1Synth is presented on a computer screen as a Bloch sphere portraying a qubit (Figure 6). The performer plays the instrument by rotating the qubit using a mouse or a MIDI gesture controller, and the system sonifies the state vectors produced by the rotations: while the qubit is being rotated, a continuously changing sound is produced. The current version of Q1Synth uses three sound synthesis techniques to do this, namely frequency modulation (FM), additive synthesis, and granular synthesis.
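The idea of sonifying a state vector with FM can be sketched as follows. The code below is a hypothetical illustration, not Q1Synth’s actual mapping, which is not described here. It assumes that θ controls the FM modulation index and φ controls the ratio between modulator and carrier frequencies, and renders a short tone as a list of samples with the classic two-operator FM formula. The constants (220 Hz carrier, 44.1 kHz sample rate, the ranges of the mapping) are arbitrary choices.

```python
import math

SAMPLE_RATE = 44100  # Hz (assumed)


def fm_params_from_qubit(theta, phi, base_freq=220.0):
    """HYPOTHETICAL mapping, not Q1Synth's actual one:
    theta (0..pi)   -> modulation index 0..10
    phi   (0..2*pi) -> modulator/carrier ratio 0.5..4.0"""
    mod_index = 10.0 * theta / math.pi
    ratio = 0.5 + 3.5 * (phi % (2 * math.pi)) / (2 * math.pi)
    return base_freq, base_freq * ratio, mod_index


def fm_tone(carrier, modulator, index, seconds=0.5, sr=SAMPLE_RATE):
    """Two-operator FM: y(t) = sin(2*pi*fc*t + I*sin(2*pi*fm*t))."""
    n = int(seconds * sr)
    return [math.sin(2 * math.pi * carrier * t / sr
                     + index * math.sin(2 * math.pi * modulator * t / sr))
            for t in range(n)]


# At the north pole the index is 0, so the output is a plain sine at the carrier.
tone = fm_tone(*fm_params_from_qubit(theta=0.0, phi=0.0))
# Rotating towards the equator raises the index, adding sidebands to the spectrum.
brighter = fm_tone(*fm_params_from_qubit(theta=math.pi / 2, phi=math.pi))
```

Recomputing the parameters every time the performer moves the qubit, and crossfading between successive buffers, would give the continuously changing sound described above.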

The instrument has a play button and a measure button. The play button starts the sound, and the user can vary the sound by rotating the qubit. When the measure button is pressed, the instrument takes control from the user. It communicates with a quantum computer to measure the qubit and moves the vector to either north or south, according to the result of the measurement. This stage is also rendered into sound. Pressing the play button again recommences the process, and so on. This is how the instrument is played.

To make the measurements, Q1Synth connects with a quantum computer remotely, through the internet. It currently uses a machine developed by IQM in Finland. There is also an option to simulate measurements locally.
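The local simulation option can be approximated in a few lines. The helper `simulate_measurement` below is hypothetical, since Q1Synth’s own code is not shown in this article. It draws 0 or 1 with the probability implied by θ and returns the new polar angle, so that the state vector snaps to the north or south pole, as the instrument’s display does after the measure button is pressed.

```python
import math
import random


def simulate_measurement(theta, rng=random):
    """HYPOTHETICAL local stand-in for a hardware read-out.
    Returns the classical bit and the new polar angle: the state vector
    snaps to the north pole (0.0) or the south pole (pi)."""
    p0 = math.cos(theta / 2) ** 2  # Born rule; phi does not affect the odds
    bit = 0 if rng.random() < p0 else 1
    return bit, (0.0 if bit == 0 else math.pi)


rng = random.Random(42)
# A vector 120 degrees from the north pole (below the equator) favours 1.
bit, new_theta = simulate_measurement(math.radians(120), rng)
# Measuring again from a pole gives the same answer.
again, _ = simulate_measurement(new_theta, rng)
```

On real hardware the repeated measurement may occasionally disagree because of noise; the simulation above is idealised.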

The composition »Spinnings« (2022) uses three Q1Synth instruments (Figure 7), each of which is played by a different performer. In this case, the performers create quantum states and measurements with three qubits.

More information about Q1Synth can be found in the paper »Q1Synth: A Quantum Computer Musical Instrument.« A lite version is freely available online.

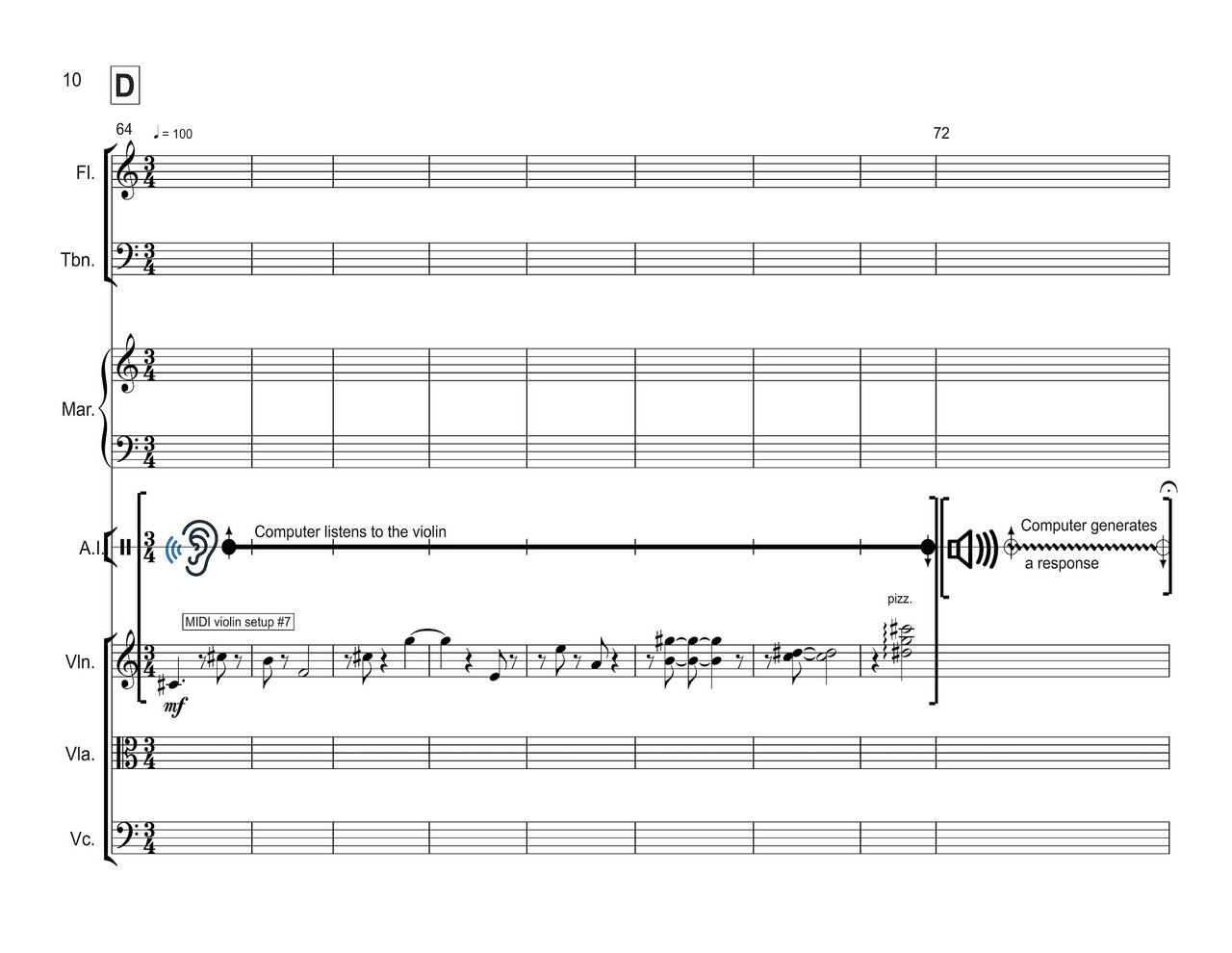

The second example involves QuPoly, a system developed for »Qubism.« This is a sonata-like piece in three movements for chamber orchestra and electronics, composed for the London Sinfonietta.

QuPoly extracts sequencing rules from input music and represents them as quantum states. Then, it generates responses based on those rules by measuring the respective quantum states. In a nutshell, consider a rule stating that there is a 30% chance that note D3 will be followed by note C3 and a 70% chance that it will be followed by note E3. In this case, the system converts those probabilities into amplitudes, whose squares give back the probabilities, for a wave function whose measurement yields C3 or E3 with those respective probabilities. Let us study this in more detail.
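For a rule with only two outcomes, one qubit suffices. The sketch below assumes a hypothetical encoding of C3 as |0⟩ and E3 as |1⟩. It computes the angle θ of a single RY rotation that turns |0⟩ into √0.3|0⟩ + √0.7|1⟩, using the standard identity RY(θ)|0⟩ = cos(θ/2)|0⟩ + sin(θ/2)|1⟩.

```python
import math


def ry_angle_for(p1):
    """Angle theta such that RY(theta)|0> = sqrt(1 - p1)|0> + sqrt(p1)|1>."""
    return 2 * math.acos(math.sqrt(1 - p1))


# Rule from the text: after D3, C3 with 30% and E3 with 70%.
# Hypothetical single-qubit encoding: |0> -> C3, |1> -> E3.
theta = ry_angle_for(0.7)
alpha, beta = math.cos(theta / 2), math.sin(theta / 2)
print(round(alpha ** 2, 6), round(beta ** 2, 6))  # 0.3 0.7
print(round(math.degrees(theta), 2))              # rotation angle in degrees
```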

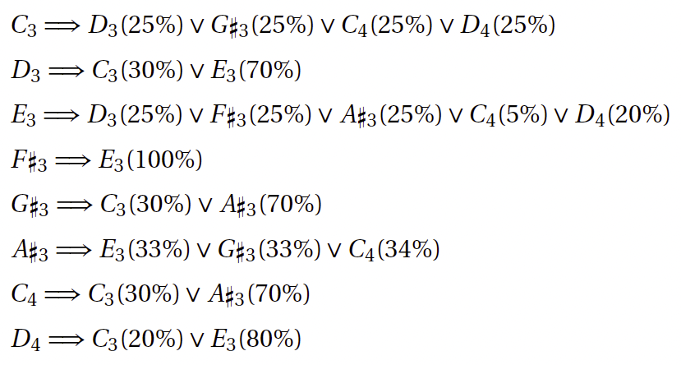

Assume that QuPoly extracted the following rules from a short tune:

The symbol »∨« stands for »or« and the percentage in parentheses next to each note is its probability of occurrence. Thus, if we give QuPoly one of the above initial notes, the system will evaluate the respective rule and produce a new note. This new note is subsequently used to pick a rule to generate another note, and so on. Each time the system needs to evaluate a rule, it automatically builds a program to manipulate qubits to produce a wave function representing the probability distribution of the rule in question. The measurement is likely to result in one of the options allowed by the rule.
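The chaining procedure described above amounts to a Markov chain over notes. The sketch below reproduces only that control flow, with classical random sampling (`random.Random.choices`) standing in for the quantum measurement. The full set of rules extracted by QuPoly is not reproduced here, so the dictionary holds only the two rules quoted in this article, for D3 and for G#3. The names `RULES`, `next_note`, and `generate` are hypothetical. Generation stops when a note has no rule.

```python
import random

# Only the two rules quoted in this article; QuPoly's full rule set is not shown here.
RULES = {
    "D3": [("C3", 0.3), ("E3", 0.7)],
    "G#3": [("C3", 0.3), ("A#3", 0.7)],
}


def next_note(note, rules, rng):
    """Pick a successor according to the rule's probabilities.
    Classical sampling stands in for measuring the quantum state."""
    options = rules[note]
    notes = [n for n, _ in options]
    weights = [p for _, p in options]
    return rng.choices(notes, weights=weights, k=1)[0]


def generate(start, rules, max_len=16, rng=None):
    """Chain rules: each new note selects the next rule, until none applies."""
    rng = rng or random.Random()
    melody = [start]
    while len(melody) < max_len and melody[-1] in rules:
        melody.append(next_note(melody[-1], rules, rng))
    return melody


print(generate("G#3", RULES, rng=random.Random(3)))  # ['G#3', 'C3'] or ['G#3', 'A#3']
```

With a complete rule set, in which every generated note has a rule of its own, the loop keeps going until `max_len` notes have been produced.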

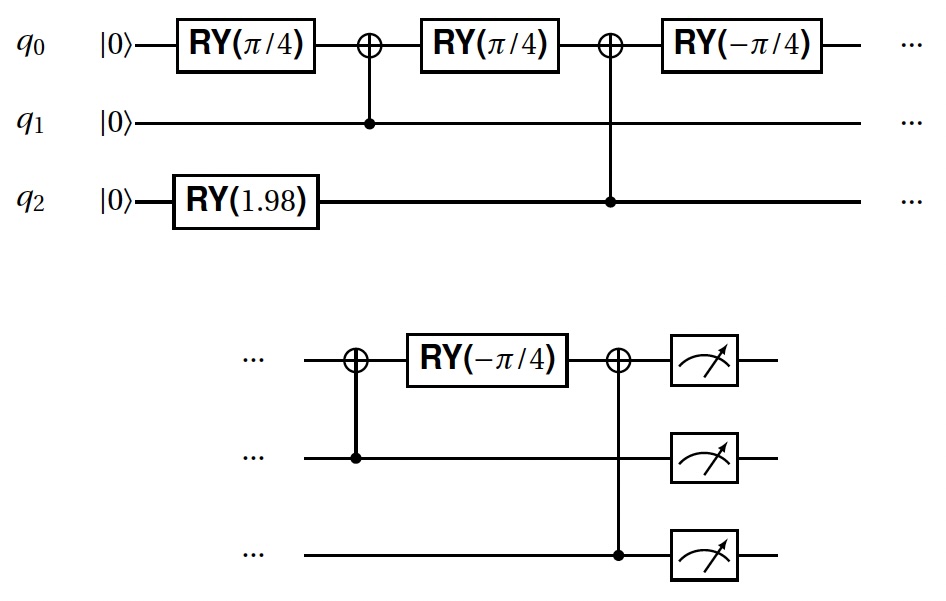

The example above requires three binary digits to represent the notes: C3=000, D3=001, E3=010, F#3=011, G#3=100, A#3=101, C4=110, and D4=111. Therefore, only three qubits are required to represent and process the rules.

Let us examine the rule for note G#3. Using the binary representation proposed above, the system expresses this rule as 100 ⇒ 000(30%) ∨ 101(70%). In this case, the system prepares the three qubits so that they will most likely produce either 000 or 101 when they are read out. Figure 8 shows a circuit representation of the instructions that prepare the qubits for this rule. The circuit is read from left to right. The various RY commands tell the system how to rotate the qubits q0, q1, and q2 about the y-axis of the Bloch sphere, tilting their state vectors between the poles.
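The state that this rule calls for, √0.3|000⟩ + √0.7|101⟩, can be checked with a small statevector simulation in plain Python. The circuit below is one possible preparation, assumed for illustration and not necessarily the one drawn in Figure 8: an RY rotation on q0 sets the 30/70 split, and a CNOT flips q2 whenever q0 is 1. Bit strings are written q2 q1 q0, the convention used by Qiskit among others. Because 101 reads the same in both directions, the result does not depend on that choice.

```python
import math

# Note encoding from the text: C3=000, D3=001, ..., D4=111.
NOTES = ["C3", "D3", "E3", "F#3", "G#3", "A#3", "C4", "D4"]
CODE = {note: format(i, "03b") for i, note in enumerate(NOTES)}
DECODE = {bits: note for note, bits in CODE.items()}


def ry(theta):
    """RY(theta): rotation about the y-axis of the Bloch sphere."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]


def apply_1q(state, gate, q):
    """Apply a 2x2 gate to qubit q (qubit 0 = rightmost bit of the index)."""
    new = list(state)
    stride = 1 << q
    for i in range(len(state)):
        if (i & stride) == 0:
            j = i | stride
            new[i] = gate[0][0] * state[i] + gate[0][1] * state[j]
            new[j] = gate[1][0] * state[i] + gate[1][1] * state[j]
    return new


def apply_cnot(state, control, target):
    """Flip the target qubit in every basis state whose control qubit is 1."""
    new = list(state)
    for i in range(len(state)):
        if (i >> control) & 1:
            new[i ^ (1 << target)] = state[i]
    return new


# Rule for G#3 (100): C3 (000) with 30%, A#3 (101) with 70%.
state = [1.0] + [0.0] * 7                       # start in |000>
theta = 2 * math.acos(math.sqrt(0.3))           # P(q0 = 1) = 0.7
state = apply_1q(state, ry(theta), 0)           # sqrt(.3)|000> + sqrt(.7)|001>
state = apply_cnot(state, control=0, target=2)  # sqrt(.3)|000> + sqrt(.7)|101>

for k, amp in enumerate(state):
    if abs(amp) > 1e-12:
        bits = format(k, "03b")
        print(bits, DECODE[bits], round(amp * amp, 3))
# 000 C3 0.3
# 101 A#3 0.7
```

Only the two patterns allowed by the rule carry any amplitude, so an ideal measurement can return nothing else; on real hardware, noise may occasionally produce other patterns, which is why the text says the outcome is »most likely« one of the two.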

For »Qubism,« there are moments in the score indicating when QuPoly listens to an instrument and generates responses. Figure 9 shows a passage illustrating this interaction. The video shows an excerpt of the premiere by the London Sinfonietta at Kings Place, London, in June 2023. Watch for the section towards the end, where the responses are played with percussive sounds.

For more details about QuPoly, the paper »Teaching Qubits to Sing: Mission Impossible?« introduces an earlier incarnation referred to as QuSing. The underlying mechanisms are identical.

The two above examples are only a glimpse of how quantum computing can be harnessed for music. There is a growing community of enthusiasts embracing this technology. They are developing innovative approaches to music making. Several of these were presented at the 2nd International Symposium on Quantum Computing for Musical Creativity, which took place in Berlin in October 2023.

If you are interested in a more in-depth introduction to the emerging field of Quantum Computer Music, I recommend the book »Quantum Computer Music: Foundations, Methods and Advanced Concepts,« published by Springer in 2022. For the more academically inclined, the University of Plymouth in the UK now offers a PhD in Quantum Computing in the Arts, Music and Humanities. Also, watch out for Moth, a newly formed company devoted to developing technology for, and promoting, quantum computing in music and the arts.

In conclusion, I would say »no, AI is not the end of the road for computer music technology.« Quantum computers are right around the corner. We do not know for sure how they will impact music and creativity, but the time is ripe to raise our periscopes and take a peek.