In technical terms, »tuning« is the process of bringing a system into a certain state of resonance. In electronics the term is related to so-called »tuned circuits.« Tuning thus involves circuitry. For good reception, the circuitry of a radio receiver needs to oscillate at the same frequency as the transmitter’s carrier; the receiving circuit is tuned to the sending circuit. A tuned circuit is usually receptive only to a specific kind of signal, mostly a periodic oscillation at a specific rate determined by the circuit’s elements (capacitor and inductor). Tuning the A string of a violin means evaluating, by ear, whether or not it vibrates 443 times per second, for instance by comparing it with a tuning fork. If the pitch of the violin does not match the pitch of the tuning fork exactly, but is very close, you can sometimes hear beats, a slow pulsation whose rate equals the difference between the two frequencies.
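
The two relations mentioned here can be made concrete with a small sketch. It assumes an ideal, lossless circuit with one inductor and one capacitor; the component values and the 441 Hz string are illustrative assumptions and do not come from any particular receiver or instrument.

```python
import math


def resonant_frequency_hz(inductance_h: float, capacitance_f: float) -> float:
    """Resonant frequency of an ideal LC circuit: f = 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(inductance_h * capacitance_f))


def beat_frequency_hz(f1_hz: float, f2_hz: float) -> float:
    """Rate of the audible pulsation between two nearly equal pitches."""
    return abs(f1_hz - f2_hz)


# Assumed example values: a 240 microhenry coil and a 100 picofarad
# capacitor, which resonate near 1 MHz (the medium-wave radio band).
f0 = resonant_frequency_hz(240e-6, 100e-12)
print(f"tuned circuit resonates at about {f0 / 1e3:.0f} kHz")

# An A string at an assumed 441 Hz against a 443 Hz reference.
print(f"beats per second: {beat_frequency_hz(443.0, 441.0):.1f}")
```

Changing either component value shifts the resonance, which is what turning the dial of a radio does.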

The telephone is a transducer

While tuned circuits are sensitive only to their resonant frequencies, the telephone, invented by Alexander Graham Bell in 1876, is receptive to a broad range of periodic and non-periodic signals. It is a transducer, a device that converts one form of energy into another, for example acoustic waves into electric current. Although input and output differ in kind, they are directly related to each other.5 It is this direct, analogic linkage of the signal flow, be it periodic or non-periodic, that allows a transducer to be considered a tuned circuit: one that is not sensitive only to a specific frequency, but is tuned to a broad range of frequencies, rhythms, fluctuations, and all sorts of signals.

Sonic experimentation

The process of revealing machinic as well as bodily, physiological, affective, and somatic processes, signals, oscillations, and rhythms to human perception via sound – the process of sonifying the sonic – has a long history. Telegraphy was first conceived by its inventors, Samuel Morse and Alfred Vail, as a visual coding system using dots and dashes, but was transformed into an auditory practice from the 1860s on. Listening to the rhythm of Morse code made by a telegraph sounder was much more efficient than staring at marks printed on a roll of paper. Telegraph operators soon developed and embodied the necessary listening skills. They were the first to tune in to machinic sound.

Tuning to nerve activity and solar winds

As early as 1878, physiologists were listening to weak voltage and current changes caused by nerve activity inside muscles and other parts of the body. Not only were small bioelectrical currents made audible, but listening to »natural radio« became prevalent with the advent of the telephone and its wired infrastructure. The sonic speculations of Thomas A. Watson, the so-called assistant of Bell, are probably the first testimonies of an aesthetic of signal transmission. Watson may have been the first person who listened to electromagnetic noise. Douglas Kahn, media and art historian of natural radio, writes: »Watson heard natural radio when the long iron telephone test line acted unwittingly as a long-wave antenna. This was before anyone knew what an antenna was or, for that matter, what electromagnetic radio waves were ... The only reason that Watson was the first person to accidentally hear these sounds was due to his privileged proximity to the right type of transducer: the telephone.«6

...and more wetware

While the development of the triode, an electronic vacuum tube, in the first decade of the twentieth century provided the basis that transformed the telephone and radio into mass media, new listening cultures in science, especially in neurology and electrophysiology, were being cultivated. In a scientific article from 1920, physiologists at Harvard Medical School described methods using vacuum tube amplifiers combined with »telephone receivers« in order to listen to neural activities. About ten years later, Edgar D. Adrian, recipient of the 1932 Nobel Prize for Physiology or Medicine and pioneer of neurology, described the process of making the amplified potential changes of nerves audible with a telephone or loudspeaker as a way »to learn something more.«7 This kind of auditory exploration is sometimes still practised as an immediate feedback method while probing brain tissue with invasive electrodes. In the late 1950s, during experiments on visual perception in the cat’s brain, David Hubel and Torsten Wiesel, recipients of the 1981 Nobel Prize for Physiology or Medicine, were looking at the visual stimuli that the cat was watching while also listening to the activity of specific neurons in the cat’s visual cortex. They found that some neurons rapidly discharged bioelectrical pulses when the cat’s vision was stimulated by a moving screen projection of thick lines oriented at one angle, while other neurons responded best to other angles: »[m]ost amazing was the contrast between the machine-gun discharge when the orientation of the stimulus was just right, and the utter lack of a response if we changed the orientation or simply shined a bright flashlight into the cat’s eyes.«8 Hubel and Wiesel included the non-visual medium of sound in their experiments, but their famous 1959 paper, »Receptive Fields of Single Neurons in the Cat’s Striate Cortex,« concealed their practice of listening, showing only the printable curve diagrams of the recorded neuronal activities.

Circuit models of wetware

Some years earlier, in the late 1940s, another duo of neurologists, Alan L. Hodgkin and Andrew F. Huxley, were trying to devise an equivalent-circuit diagram of the action potential discharged by the giant axon of the squid. This mathematical model circuit, later called the Hodgkin-Huxley model, with symbolic resistors, a capacitor, and voltage sources, enabled them to simulate a time-varying signal that comes close to the signal other scientists had already been listening to ten years earlier. In the 1930s and 1940s, the modeling of signal processes with so-called equivalent circuits already had an established tradition, most prominently in acoustics but also in other fields of science and engineering. Electric oscillations, as well as bioelectric signals and acoustic vibrations, are equivalent in mathematical terms, and can all be described by using equivalent-circuit diagrams. Moving from acoustics to electronics was thus merely an act of algebraic translation. Acoustic variables such as force, speed, displacement, mass, and elasticity were replaced by electric variables such as voltage, current, charge, inductance, and capacitance.13 Signal processing in electrophysiology and acoustics became a matter of electronic communication, and thus of circuitry. Furthermore, listening, tuning, and un-tuning to electroacoustic signals became an important skill not only for acousticians but also for electrophysiologists and engineers of media technologies.
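
The algebraic translation can be illustrated with a minimal sketch. It assumes the simplest possible case, an undamped oscillator, and uses made-up values for mass and stiffness; the function name is hypothetical. The same formula is evaluated once with mechanical quantities and once with the electrical quantities that replace them.

```python
import math


def natural_frequency_hz(inertia: float, compliance: float) -> float:
    """Natural frequency of an undamped second-order oscillator.

    Mechanical reading: inertia = mass (kg), compliance = 1/stiffness (m/N).
    Electrical reading: inertia = inductance (H), compliance = capacitance (F).
    """
    return 1.0 / (2.0 * math.pi * math.sqrt(inertia * compliance))


# Assumed mechanical system: 0.01 kg on a spring of stiffness 4000 N/m.
mass_kg = 0.01
stiffness_n_per_m = 4000.0
f_mechanical = natural_frequency_hz(mass_kg, 1.0 / stiffness_n_per_m)

# Translation: mass becomes inductance, elasticity becomes capacitance.
inductance_h = mass_kg
capacitance_f = 1.0 / stiffness_n_per_m
f_electrical = natural_frequency_hz(inductance_h, capacitance_f)

print(f"mass on spring: {f_mechanical:.1f} Hz")
print(f"equivalent circuit: {f_electrical:.1f} Hz")
```

Both readings yield the same number because only the names of the variables change, not the equation.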

Affects of machines / machine bodies

Speech transmission in telephony was based on continuous signals, whereas the operations that connected two telephone apparatuses were shaped by discrete impulses, switching, telegraphic signals, pure tones, and other electroacoustic signals. These were audible – sometimes by mistake and at other times on purpose. The previously manual switching done by female telephone or switchboard operators, who spoke directly with their customers, was gradually replaced by machines as of the 1940s. According to Bell System, the New York metropolitan area of the 1920s already had 1.4 million telephones and about 158 central offices, with operators serving up to ten thousand lines from one office. Forty years later, more than 15 million telephones had access to nationwide dialing.

Digital signal processing in the 1920s, simply called »signaling and switching,« came with the need to manage, control, automate, optimise, and economise the many switching operations necessary for establishing a connection between two telephone users. The switching was done by relays, which not only made a deafening machinic noise of layered rhythmic rattling sounds, but were also controlled by rhythmic signals: pulse trains emitted by the dial circuit of the telephone. Other, more tone-like signals were used in long-distance calling from the 1950s on. Signaling and switching in telephony was audible because signaling and speech were transmitted over the same wire. This was called »in-band signaling.« The transducer built into the telephone receiver acted like a microphone. It transformed not only the human voice but also audible electroacoustic impulses, strange beeps, and other machinic sounds into voltage fluctuations. In the early 1960s this latent vulnerability was discovered through the playful explorations of interested amateurs, tinkerers, and students in North America. Phil Lapsley, author of Exploding the Phone and historian of this often forgotten subculture of »phone phreaks,« narrates the story of Ralph Barclay, a young Washington State University student in Pullman. In spring 1961 he made an electronic device, later called the blue box, that enabled him to communicate with, control, and tune into the telephone network’s automatic switching machinery. All of the required information was available in an article published in the November 1960 issue of the Bell System Technical Journal, which he found in the university library. In the 1960s one could pick up the telephone and make a free call to an operator (directory assistance) anywhere in the US. By sending a 2600 Hz tone before the operator could answer the call, one could trick the machinic listening circuitry built into the automatic switching system. By then sending a specific combination of two frequencies for each digit of the desired telephone number, one could dial any number in the US for free.
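
What such a device had to produce can be sketched in a few lines. This is an illustration under assumptions, not a reconstruction of Barclay’s circuit: the frequency pairs follow the commonly documented Bell System multi-frequency scheme and are not given in this text, while the sample rate, the tone and pause durations, the telephone number, and all function names are hypothetical.

```python
import math

SAMPLE_RATE_HZ = 8000  # assumed sample rate for the sketch

# Two-tone multi-frequency (MF) pairs as commonly documented for
# Bell System operator signaling; an assumption, not from the text.
MF_PAIRS_HZ = {
    "1": (700, 900), "2": (700, 1100), "3": (900, 1100),
    "4": (700, 1300), "5": (900, 1300), "6": (1100, 1300),
    "7": (700, 1500), "8": (900, 1500), "9": (1100, 1500),
    "0": (1300, 1500),
    "KP": (1100, 1700),  # start-of-number marker
    "ST": (1500, 1700),  # end-of-number marker
}


def tone(freqs_hz, duration_ms):
    """Sum of sine waves, scaled so the result stays within [-1, 1]."""
    n = SAMPLE_RATE_HZ * duration_ms // 1000
    return [
        sum(math.sin(2 * math.pi * f * i / SAMPLE_RATE_HZ) for f in freqs_hz)
        / len(freqs_hz)
        for i in range(n)
    ]


def silence(duration_ms):
    """A pause between tone bursts."""
    return [0.0] * (SAMPLE_RATE_HZ * duration_ms // 1000)


def blue_box_signal(number):
    """2600 Hz tone first, then KP, the digits, and ST as two-tone bursts."""
    samples = tone((2600,), 1000) + silence(100)
    for symbol in ["KP", *number, "ST"]:
        samples += tone(MF_PAIRS_HZ[symbol], 75) + silence(50)
    return samples


signal = blue_box_signal("5551212")  # hypothetical number
print(len(signal) / SAMPLE_RATE_HZ, "seconds of audio samples")
```

Because signaling travelled in-band, playing such samples into the mouthpiece was enough; the switching machinery could not tell them apart from its own control tones.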

Tuning into computers

Messing around with the telephone network soon became an intellectual playground for young engineering students. Towards the end of the 1970s, this microculture turned into a well-known subculture. More historical evidence for epistemological hacking in the early 1960s is found in an article in The Tech, the student newspaper of the Massachusetts Institute of Technology (MIT), titled »Telephone Hackers Active« and dated 20 November 1963. The article describes how MIT students were caught playing with the phone system, some using the university’s PDP-1 computer to search the lines. This machine was not the first computer at MIT to make sounds; the TX-0, for example, was a precursor. In an email interview I conducted with Peter Samson, who was a student at that time, he wrote: »[t]he TX-0 had a built-in loudspeaker, mostly to aid in debugging programs. The loudspeaker was attached to one bit of the machine’s accumulator register. The tones and patterns of sound it would make became familiar to the machine’s users, and could help determine whether a specific program was operating properly or not.« Samson also wrote a compiler for the PDP-1, which simplified coding music with it. Notably, it was possible to play four tones at once, which made it theoretically possible to synthesise the two simultaneous tones that the »phone phreaks« would use for controlling the telephone network.
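
Why a single accumulator bit is enough to give each program its own sound can be shown with a toy simulation. It is a sketch under loud assumptions: the 18-bit word length, the step time, the chosen bit, and the two counting »programs« are hypothetical stand-ins and do not model the actual TX-0 instruction set.

```python
WORD_MASK = (1 << 18) - 1  # assumed 18-bit accumulator
CYCLE_TIME_S = 10e-6       # hypothetical time per simulated step


def speaker_stream(accumulator_values, bit):
    """The loudspeaker sees only one bit of each accumulator value."""
    return [(value >> bit) & 1 for value in accumulator_values]


def estimated_pitch_hz(stream, cycle_time_s=CYCLE_TIME_S):
    """Estimate pitch from how often the bit flips (two flips per period)."""
    flips = sum(1 for a, b in zip(stream, stream[1:]) if a != b)
    return flips / (2 * len(stream) * cycle_time_s)


STEPS = 25_600
# Two toy "programs": one counts up by 1 per step, the other by 3.
program_a = [(step * 1) & WORD_MASK for step in range(STEPS)]
program_b = [(step * 3) & WORD_MASK for step in range(STEPS)]

pitch_a = estimated_pitch_hz(speaker_stream(program_a, bit=7))
pitch_b = estimated_pitch_hz(speaker_stream(program_b, bit=7))
print(f"program A: about {pitch_a:.0f} Hz, program B: about {pitch_b:.0f} Hz")
```

The bit flips at a rate set by what the program does to the register, so a loop that stalls or runs astray is heard as a change in pitch or rhythm.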

Computer music before its invention

Amplifier-loudspeaker setups connected to the circuitry of early computers, such as the one built into the TX-0, and probably the PDP-1 as well, were not unusual. Other famous computers with such circuitry were the UNIVAC-I, the CSIRAC in Australia, and the Pilot ACE in England, as well as later machines such as the Pegasus produced by Ferranti Ltd., also in England. A working program had its characteristic sound depending on where the amplifier’s input was connected, and the sound changed only when a fault was detected. Individual flip-flops in different registers, different data bus nodes, or other passages of data traffic could become sources for bleeps, pulses, noises, and other electroacoustic signals. Not only was passive listening to processes of computation very common, but so was an active exploration of the machine while listening to its rhythms. Machine instructions and algorithms somehow became algorhythms.14

Replacement of listening skills

While some students at MIT were playing with the PDP-1, Fernando José Corbató, a postdoctoral researcher at the same institution, was leading a team developing the Compatible Time-Sharing System (CTSS). CTSS was a computer system that could monitor itself. It was an early version of what later became known as an operating system. With CTSS, the wetware of the chief engineer and operator responsible for the maintenance of the computing machines was replaced by software. Routine error detection and process monitoring, previously performed by humans and human ears, were partly built into the functionality of computers. The computers could soon listen to themselves. Amplifier-loudspeaker setups disappeared. At the end of the 1960s nobody was listening to the rhythm, noise, and melodies of data signals anymore; instead, people were reading signs and alphanumeric symbols on their screens. To put it provocatively: reading, inspecting, and looking won over listening.

Un-tuning

Operators disappeared long before the development of operating systems in mainframe computing. Telegraph operators were replaced by teleprinters in the 1920s. From the 1950s on, telephone operators were gradually replaced by automatic switching machinery. Even the computer itself replaced the mostly female mathematicians who had been called computers. Other fields touching on listening practices, media technology, operators, their disappearance, and their implementation into technology are, for example, the history of acoustic location in aircraft detection, sound-ranging for artillery detection, sonar, and radar.

The auralisation of specific signals, from the above-described Morse code, neural activity, natural radio, and single neurons to switching machinery in telephony and electronic computers, together with the necessary cognitive skills of the persons listening to these sounds, was first constituted as a cultural technique.15 After this phase, listening skills were often formalised, abstracted, automated, and finally implemented and assimilated into machinic processing. The sounds were silenced and un-tuned, and disappeared to become part of an inaudible operativity.

More than just looking

Understanding complex communication networks, machinic processes, biological signaling, and their ecologies by listening, tuning, and un-tuning to their signals with simple transducers such as the loudspeaker has a long history. But even by listening, especially in the case of acoustic media technology, their inner workings and signal processing are not audible per se. This inaudibility is often associated with invisibility and thus blindness. The so-called blind spot of media conceptualises the inability to perceive the operativity of media. Correspondingly, the signal processing of imaging technology is not graspable as an image. Understanding seeing by looking closer is not possible. Besides waiting for the rare moments when media processing, or operativity, becomes perceivable, mostly through unexpected disturbances, glitches, and failures, it is oscillating between looking and listening as described above that offers a fruitful approach for perception. Radar is not seeing with radio, but closer to radio listening. Similarly in sonar, ultrasonic pulses are transmitted and the time elapsed until their echo returns is measured. This is not seeing underwater, but rather listening.
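
The principle shared by sonar and radar, timing an echo, fits into one line of arithmetic. The following sketch assumes a constant propagation speed and a single reflecting object; the speed of sound in seawater is an approximate value and the example times are made up.

```python
SPEED_OF_SOUND_SEAWATER_M_S = 1500.0  # approximate, assumed value
SPEED_OF_LIGHT_M_S = 299_792_458.0    # for radar pulses


def echo_distance_m(round_trip_time_s, propagation_speed_m_s):
    """The pulse travels out and back, so the one-way distance is half."""
    return propagation_speed_m_s * round_trip_time_s / 2.0


# Hypothetical measurements: 0.4 s for a sonar ping, 200 microseconds for radar.
print(echo_distance_m(0.4, SPEED_OF_SOUND_SEAWATER_M_S), "m (sonar)")
print(echo_distance_m(200e-6, SPEED_OF_LIGHT_M_S), "m (radar)")
```

In both cases the apparatus waits for a returning signal and measures time, which is closer to listening than to forming an image.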

Algorhythmics

In order to understand how our current high-tech machinery operates and which cultural, aesthetic, epistemological, and critical aspects are involved in its dissemination, a concept I previously called algorhythmics might offer a technologically accurate and also creative position. While the term »rhythm« refers not only to aesthetics, sound, and living organisms, but also to sonicity, signal processing, modulation, fluctuations, and vibrations, the term »algorithm« refers more to computer science, mathematics, statistics, formal languages, or logics. By synthesising algorithm with rhythm the neologism »algorhythm« is born, oscillating between code and signal, between the symbolic and the physical side of computational media.

Algorhythmics is thus a specific mental mode of tuning, a method of research-creation and artistic research as practised by myself and others whose work is informed by the specific post-digital interweaving of energy and information, of circuitry with computation, and of symbolic manipulation with energetic processing. »The algorhythmic« is an extension of »the sonic« as an overall category of signal flow, transgressing the limits of the musical and the acoustic with aspects of symbolic manipulation, information, computation, and technomathematics.16

- 5

Devices that sense all forms of stimuli, such as heat, radiation, sound, vibration, pressure, acceleration, and so on, and that can produce output signals that are electrical, pneumatic or hydraulic may be called transducers. Thus many measuring and sensing devices, as well as loudspeakers, thermocouples, microphones, and phonograph or guitar pickups are all transducers.

- 6

Kahn, D. (2013), Earth Sound Earth Signal: Energies and Earth Magnitude in the Arts, Berkeley: University of California Press, 14.

- 7

Adrian, E. D. (1932), The Mechanism of Nervous Action. Electrical Studies on the Neurone, Philadelphia: University of Pennsylvania Press, 6.

- 8

Hubel, D. H. (1988), Eye, Brain, and Vision, New York: W. H. Freeman (Scientific American Library), 69.

- 9

Wittje, R. (2013), »The Electrical Imagination: Sound Analogies, Equivalent Circuits, and the Rise of Electroacoustics«, 1863—1939, Osiris, 28/1, 43f.

- 10

For more details, see Miyazaki, S. (2012), »Algorhythmics: Understanding Micro-Temporality in Computational Cultures, Computational Cultures«, A Journal of Software Studies, No. 2, online issue. And by the same author (2013), »Urban Sounds Unheard-of: A Media Archaeology of Ubiquitous Infospheres«, Continuum, 27/4, 514—522; and Algorhythmics.

- 11

Parikka, J. (2013), »Afterword: Cultural Techniques and Media Studies«, Theory, Culture & Society, 30/6, 147–159.

- 12

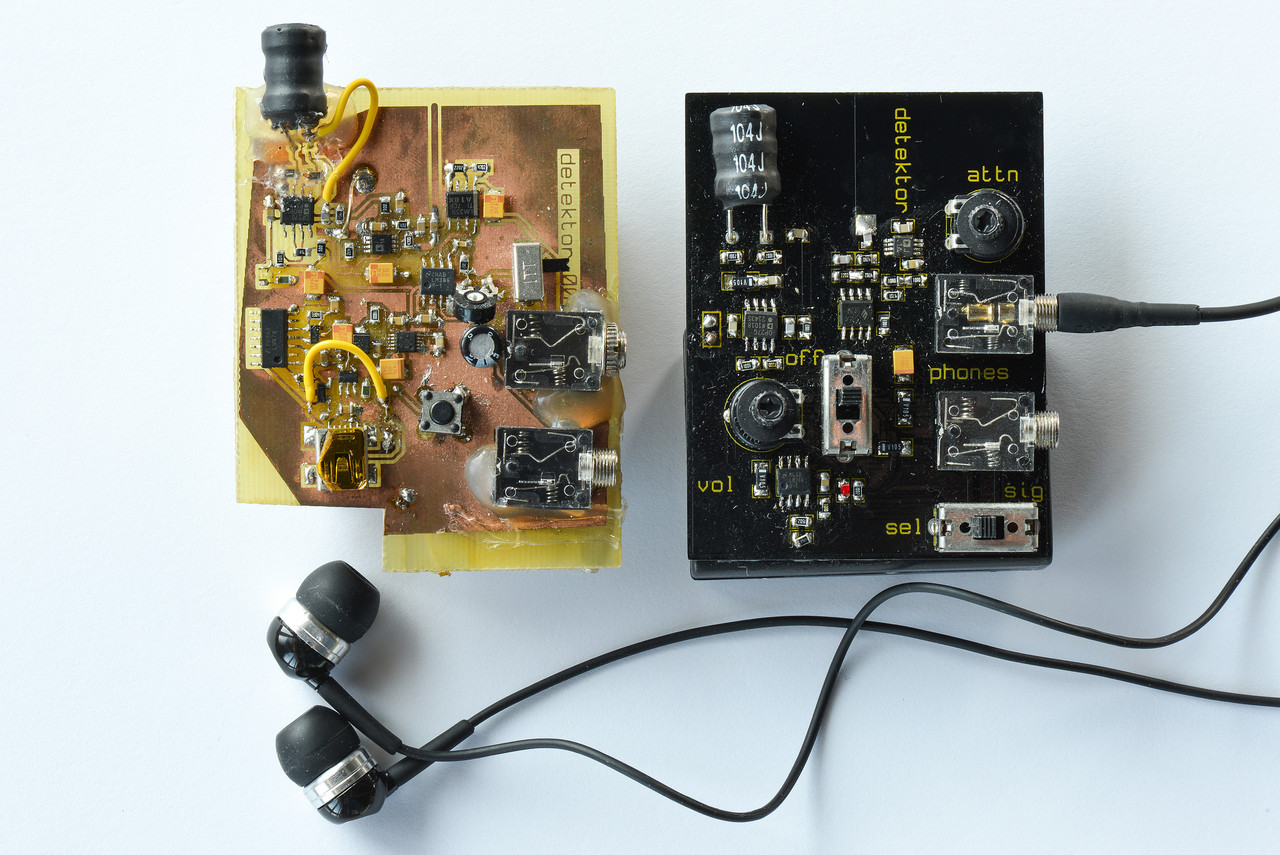

See Algorhythmics & Detektors for examples of such practices.

- 13

Wittje, R. (2013), »The Electrical Imagination: Sound Analogies, Equivalent Circuits, and the Rise of Electroacoustics, 1863–1939«, Osiris, 28/1, 43f.

- 14

For more details, see Miyazaki, S. (2012), »Algorhythmics: Understanding Micro-Temporality in Computational Cultures«, Computational Culture: A Journal of Software Studies, No. 2, online issue. And by the same author (2013), »Urban Sounds Unheard-of: A Media Archaeology of Ubiquitous Infospheres«, Continuum, 27/4, 514–522; and Algorhythmics.

- 15

Parikka, J. (2013), »Afterword: Cultural Techniques and Media Studies«, Theory, Culture & Society, 30/6, 147–159.

- 16

See Algorhythmics & Detektors for examples of such practices.

CTM 2015: Manifestations of Psyche. Lecture by Shintaro Miyazaki by CTM Festival
