To understand the roots of immersive sound, and how that might chart its future, it’s worth a trip back to the early days of motion pictures. Let’s sit ourselves in one of the lush, padded seats of New York’s Broadway Theatre in midtown in November 1940, where Walt Disney Productions is about to premiere its feature-length orchestral cinematic reverie, Fantasia.

Spatial audio’s Fantasia moment

Fantasia doesn’t open with animation. A curtain is drawn to reveal an empty, blue-lit cyclorama, foregrounded by the silhouettes of a conductor’s podium and some music stands. There are the fragmented sounds of the orchestra warming up – a flurry of clarinet notes, a recognisable bit of Tchaikovsky.

The players enter with a shuffle of footsteps as the Philadelphia Orchestra members take their places, and then the room fills with the sound of the players tuning to concert A. Classical music lovers know that progression of sound by heart, and it’s instantly spatial – the lone note of the oboe is followed by a sweeping flood of instruments across the spectral range of the orchestra, reverberating from left to right, across the ensemble’s sections and throughout the hall.

Or that’s how it sounds in a concert hall with live musicians, but not, typically, in a mid-century cinema. The standard means of distributing sound at the time was on a single, monophonic optical track, reproduced through one tinny-sounding centre speaker. The audience would see all the players of the orchestra, for instance, but the audio – with badly compressed dynamic and frequency ranges – would unrealistically originate from a single point behind the screen. Cinema had advanced the visuals to make them immersive – Disney’s own multiplane camera invention offered depth, a lush colour gamut was created via the Technicolor process, and the widescreen image format filled the audience’s view. What remained was sound.

Binaural to Fantasound

It was the same limitation that had frustrated engineer Alan Dower Blumlein almost a decade earlier, even though synchronised sound was itself a relatively new invention. The engineer famously went to the pictures with his wife Doreen and was maddened that the all-centre-channel sound didn’t match up with the position of the actors in the image. Blumlein was motivated enough to invent a series of technological innovations – set out in a patent with some 70 claims – that we now know as stereo. He proved the format by recording the London Philharmonic a couple of years later in what is now Abbey Road Studios. Then called »binaural sound« (not to be confused with what now carries that label), it was an entirely new means of recording, mixing, and reproducing sound, allowing recordings to encode and decode position information across a horizontal field.

But while Blumlein was inspired by cinema’s limitations, his work had still not reached movie theatres by 1940. And Disney wanted to go even further – not just providing some basic illusion of linear field, but creating immersive sound, impactful enough that you’d imagine the bee buzzing around you in »Flight of the Bumblebee« or hear floods of water from all sides in the climax of the Sorcerer’s Apprentice.

So in a handful of very expensive screenings, the Disney company went around their own distributor RKO and organised their own roadshow of Fantasia on custom hardware – a new, multichannel format they dubbed »Fantasound.«

»Sound enters a new era,« proclaimed RCA of their work on the project with Disney. Left, centre, and right soundtracks were printed together with a fourth »control« track manipulating gain, directly on 35mm sound film. (You had to then synchronise the sound film with another film containing the image.) To deliver to the audience, that left/right/centre configuration was distributed through a bunch of speakers – arrays of 12 left, 12 centre, and 12 right speakers, plus an additional 54 »house« speakers in the audience, or 90 speakers in all. Unlike modern surround, Fantasound had no independent rear channels, but audiences probably had the impression of rear audio from all the speakers emitting sound around and behind them.

The speakers were hidden behind the screen, but as photos of the installation show, the towering arrays look like nothing if not the 1940s cousin of Berghain’s Funktion-One stacks. (That’s a relevant comparison – Berghain’s reputation is in part thanks to bathing patrons in visceral sound from all directions on their main club floor, even if the source remains stereophonic. It’s Fantasound for dancers.)

To prepare sounds for all those speakers, the Fantasound system incorporated entirely new recording techniques, too. Some came with new problems – Disney’s idea of separating orchestral sections with custom-constructed plywood baffles initially meant the players couldn’t hear one another and struggled to play in time. Disney also needed new equipment for mixing, and new electronics and machinery for encoding and decoding the materials.

Studio tech boss William E. Garity invented the »panoramic potentiometer« as a means of directing where sound should go – the original »pan pot,« a left/right knob found on each channel strip on any studio mixer today. The system even included the equivalent of what we’d now think of as mixdown automation – there was custom dynamic control of different sections of the soundtrack and score, intended to shift focus and bandwidth to different source materials at the appropriate times in the film. Whereas computer software can now do this, the Disney engineers used a simple analogue control voltage to record and play back adjustments to volume.
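As a rough illustration of what a pan pot does, here is a minimal sketch of a modern two-channel constant-power pan law. It is not a model of Garity’s original circuit; the function name `constant_power_pan` and the -1 to 1 position range are assumptions chosen for this example.

```python
import math
from typing import Tuple


def constant_power_pan(position: float) -> Tuple[float, float]:
    """Return (left_gain, right_gain) for a pan position.

    position: -1.0 is hard left, 0.0 is centre, 1.0 is hard right
    (an assumed convention for this sketch).
    """
    if not -1.0 <= position <= 1.0:
        raise ValueError("position must be between -1.0 and 1.0")
    # Map the position onto a quarter circle, 0 .. pi/2.
    angle = (position + 1.0) * math.pi / 4.0
    # cos^2 + sin^2 = 1, so the summed power stays constant as the
    # sound moves between the two speakers.
    return math.cos(angle), math.sin(angle)


if __name__ == "__main__":
    for pos in (-1.0, -0.5, 0.0, 0.5, 1.0):
        left, right = constant_power_pan(pos)
        print(f"pan {pos:+.1f}: left {left:.3f}, right {right:.3f}")
```

Using sine and cosine rather than a straight linear crossfade keeps the perceived loudness roughly even as the sound travels, which is one common design choice among several pan laws.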

The result was clear – immersion and simulated realism. As RCA put it, »the basses, woodwinds, strings, brasses, and other sections…are heard with all the dramatic beauty that normally reaches only the ears of the conductor…music is heard as if the orchestra were actually present!« The New York Times agreed, calling it »unprecedented effectiveness.«

In case anyone missed the spatial separation, it’s emphasised right in the first shots of the film. Conductor Leopold Stokowski turns first left to the violins, then right to the woodwinds, then centre to the double basses, then reaches out his arms as the full instrumentation booms into Bach’s bombastic Toccata and Fugue. In a Fantasound-equipped theatre, each gesture would have brought those enormous speaker stacks to life. Surround sound in any form would take 20 years to reach theatres. But the concept was the one that sound-loving cinemagoers immediately wanted – realistic immersion that matches acoustic experience.

Live arenas as laboratories

That was 1940; let’s travel forward to the present. Immersive sound remains by its nature a collaboration between artist, sound engineer, audio technology, and venue – part hall, part laboratory.

As expected, deep-pocketed clients specialising in innovative spectacles are among the first to take risks on this sort of technology. Hollywood and Vegas are big consumers. So is Disney – Fantasound never caught on, but multichannel audio was used as the control scheme for the company’s »animatronic« robotic displays at Disneyland and Disney World, and Disney Parks continue to play with new immersive sound tech. Companies like Dolby have spent the past decades selling related tech for home consumption in various forms – first in matrix quadraphonic sound (1960s–70s), then variations on surround (1980s and 90s, especially with Dolby Surround and DTS). Now, too, Apple is working with Dolby to push immersive consumer sound – building on a legacy of Dolby working for decades to convince home consumers to invest in sound that parallels their cinema experiences. You’ll get some simple version beamed into your headphones and decoded with Apple’s custom silicon, even in the company’s earbuds.

All of this might then seem like an expensive toy or consumer gimmick. But with the spread of research laboratories, computers, mobile technology, and readily available versions of this tech, spatial audio is no longer limited to corporate or consumer applications. Across the European landscape – and with an active hub in Berlin – artists and cultural workers are also working to proactively drive use of the same technologies, in ways that can be experimental, radical, personal, and performative.

The reason to keep Fantasound in mind is that it’s a decent example of a fully experimental, bespoke system – even by today’s standards – and as such, you can see some of the basic elements of spatial audio production for live events. You start by encoding some spatial information in the recording – in the case of Fantasound, beginning with live multichannel recording. You have a control mechanism – Fantasound used its pan pot and its tone-operated volume control, »togad.« And then you’ve got a bunch of speakers arrayed through the space, plus some discrete audio information that you can route to those speakers that already contains some spatial information.

For all the competing systems and complexity, that basic formula remains today:

1. Encoded and decoded spatial information

2. A bunch of speakers around the space

3. A control system for production and live use

That will read as an oversimplification to more experienced spatial audio researchers, but the basic idea isn’t wrong. You want to use a bunch of speakers to immerse listeners in sound and have some precision about where sound is placed. The devil is then in the details.

The radical transformation now is the addition of digital storage and computation. In place of kilometres of optical and magnetic tape, you have digital files and code. In place of simple analogue controls like the pan pot and massive, room-filling »togad« apparatus for mix control, you have digital interfaces. And most importantly, the ability to compute how signals should be directed now allows sophisticated, precise algorithms that can direct sound reproduction and trick the ear into thinking sounds are exactly located in space.

This tech can be part of the sound system. It can run on your production computer. It can even in many forms run on your mobile phone.

From laboratory experiment to live instrument

Think about stereo for a moment. If you treat stereo sound as just two mono speakers instead of one, there isn’t much magic – or it really doesn’t even work. You could put some sound in the left speaker, some in the right. But stereo is only convincing when you stop thinking of the speakers or headphone cans altogether. The mix is most satisfying when it’s seamless, and you start to imagine that you’re hearing the sound in space – focusing less on the reproduction of a recording, and more on the sound as you’re perceiving it in the moment.

Spatial audio is understood to work in much the same way, but with many more speakers and positioning that goes beyond just a straight line. Even in good stereo mixing, there are ways of achieving illusions of depth and height. With the enabling technologies of immersive sound, that depth is geometrically expanded – literally speaking.

Thanks to more powerful computation – yes, even on your iPad or computer – plus networked standards for piping audio around for live events, the setup today is radically simpler than it was in the past.

There are competing formats for sound systems or PAs, including vendors like Meyer Sound, d&b audiotechnik, and L-Acoustics (with L-ISA), plus more specialised offerings from the likes of Holoplot with speakers built for exact spatialisation. But a lot of the real creativity is on the side of control and sound – the actual manipulation of audio being increasingly in the hands of the artist and not only the engineer or researcher. Even for the purposes of researchers, what once was a slow, lab-based process is now increasingly real-time, experienced directly through perception.

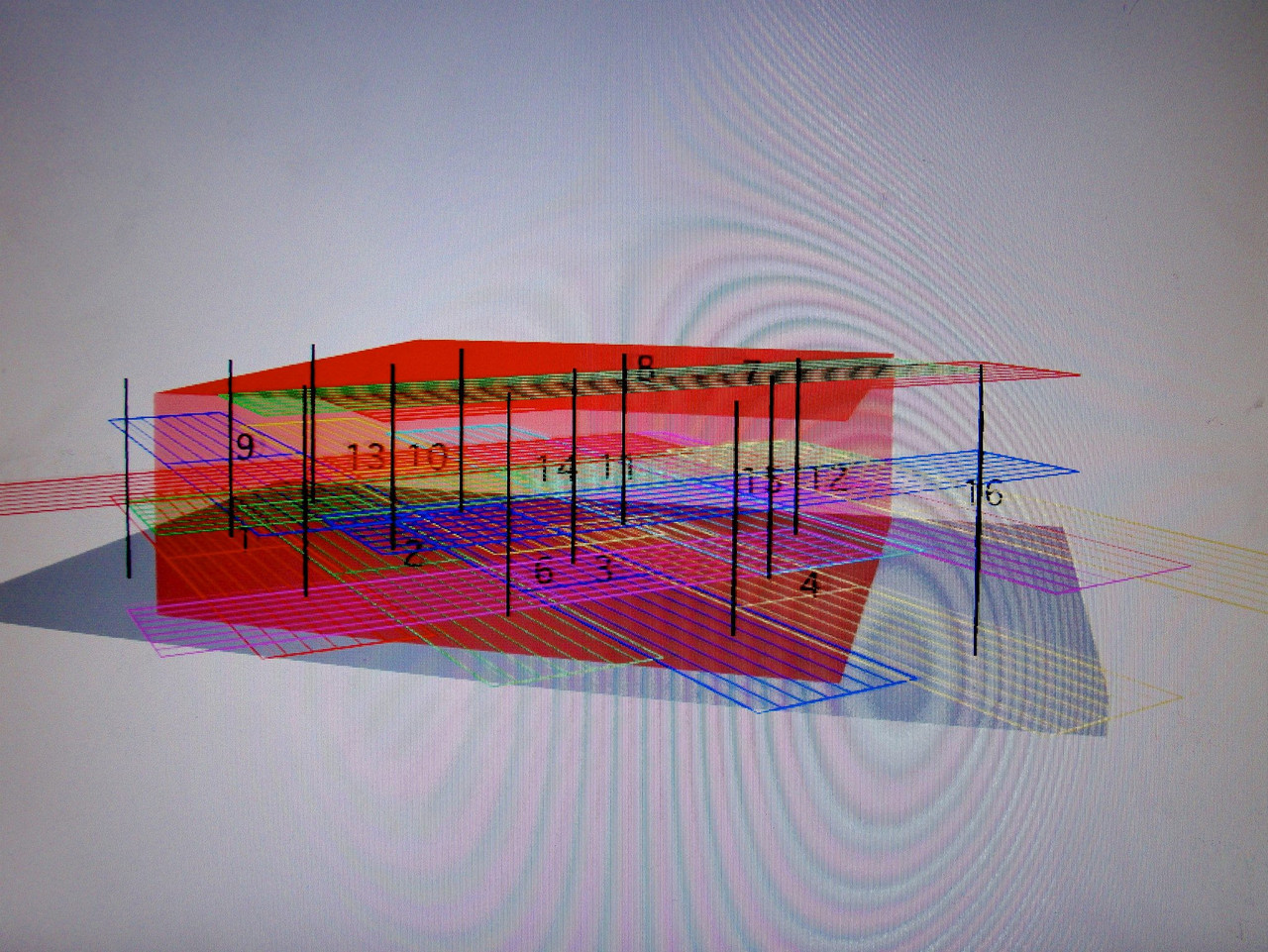

We can take Meyer Sound Galileo GALAXY as a good example, as deployed at this year’s CTM Festival. What Meyer Sound have done is add their own networkable processing – essentially rack-able boxes that do the hard work of computation for positioning sound across arrays of speakers.

An artist arriving with some sound materials can send analogue output or digital networked sound from a capable mixer or computer into the processing units. That processor does all the calculation of positioning and delay, and routes signal to the speakers.
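To give a feel for the kind of calculation such a processor performs, here is a deliberately simplified sketch that derives a delay and a gain for each speaker from its distance to a virtual source. It is not Meyer Sound’s algorithm, which this article does not describe; the function `speaker_feeds`, the `rolloff` parameter, and the example coordinates are all hypothetical.

```python
import math
from typing import List, Sequence, Tuple

SPEED_OF_SOUND_M_S = 343.0  # approximate value for air at about 20 °C

Point = Sequence[float]


def speaker_feeds(
    source: Point, speakers: Sequence[Point], rolloff: float = 1.0
) -> List[Tuple[float, float]]:
    """Return one (delay_seconds, gain) pair per speaker.

    Speakers further from the virtual source play later and quieter.
    Delays are relative to the nearest speaker; gains are scaled so
    that the summed power across all speakers equals 1.
    """
    if not speakers:
        raise ValueError("at least one speaker is required")
    distances = [math.dist(source, spk) for spk in speakers]
    nearest = min(distances)
    raw = []
    for d in distances:
        extra = d - nearest  # extra path length in metres
        delay = extra / SPEED_OF_SOUND_M_S
        gain = 1.0 / (1.0 + rolloff * extra)  # assumed roll-off curve
        raw.append((delay, gain))
    norm = math.sqrt(sum(g * g for _, g in raw))
    return [(delay, g / norm) for delay, g in raw]


if __name__ == "__main__":
    # Hypothetical square of four speakers, positions in metres.
    quad = [(-2.0, 2.0), (2.0, 2.0), (-2.0, -2.0), (2.0, -2.0)]
    for delay, gain in speaker_feeds((1.0, 1.5), quad):
        print(f"delay {delay * 1000:6.2f} ms, gain {gain:.3f}")
```

Real systems use more refined panning laws and per-speaker tuning, but the principle is the same: the artist describes where the sound should be, and the processor works out what each speaker has to do.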

Meyer Sound uses a popular format called AVB, which works over an Ethernet connection. (Dante is a frequently used competing format.) An end user can simply stream their audio to that connection from their computer, then control spatialisation using tools on the computer screen or an iPad remote app. Meyer Sound’s solution is particularly convenient in that it adds an app called Spacemap Go that provides easy realtime mixing – not just positioning sound as x, y, z coordinates, but »flying« sounds through complex dances in space called trajectories.

Other popular solutions involve tools like Forum IRCAM’s OSCar, which sends OpenSoundControl messages over the network.

The upshot of all of this is that the composer or audio artist can jam, choreograph, record, and recall animated movements of sound through space in sophisticated ways, on whatever system they may have available. They can also do that without being overly concerned about where the speakers are – focusing instead on the sound as it’s heard in the space.

A garden of new spatial sound arenas

Different spatial audio systems do have plenty of commonalities. That matters for production, as there are standardised ways of storing data about everything from the shape of your head and ears (for calibrating headphone functions) to spatial mix automation formats to how to connect to different systems and send control data.

But experimentation and invention can also push the envelope now just as they did in the last century. For artistic purposes, different systems mean different characters, visual settings, control capabilities, and physical architecture.

And these options are growing. In Berlin alone, you now have a selection of immersive-sound options that go beyond stereo:

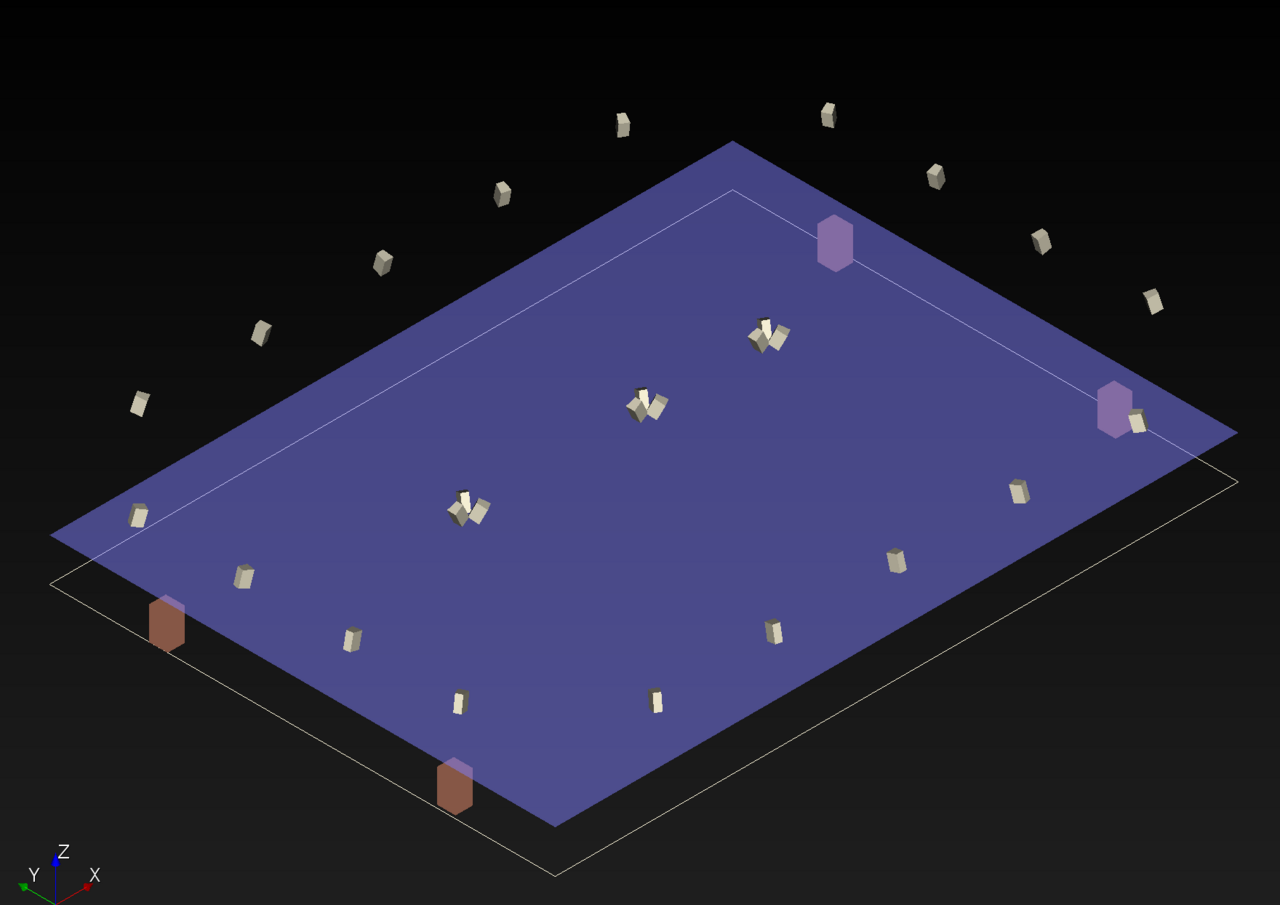

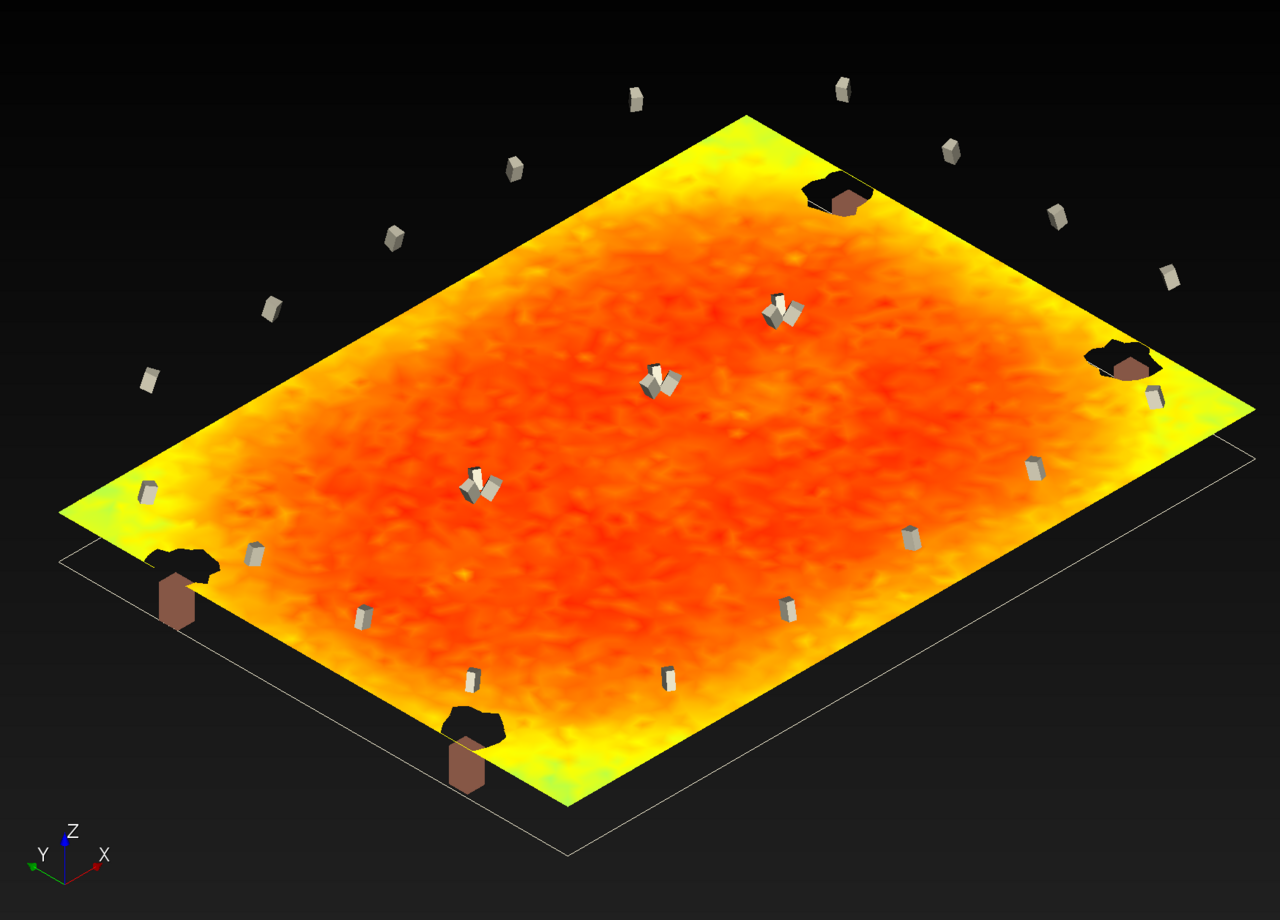

MONOM is a permanent venue for 4DSOUND technology, employing 9 subwoofers and 48 omni speakers, controlled with tools like Ableton Live and Max for Live. That has meant a regular programme of installations and performances in various configurations, and a consistent venue that allows ongoing spatialised programmes. 4DSOUND have also developed artists’ abilities through initiatives like their Budapest-based research residency programme on the system. In 2014, we worked together to develop a 24-hour intensive laboratory for invited guests as part of the 4DSOUND programme at Amsterdam Dance Event, a spatial sound hacklab. (I also spent time working with Robert Lippok on a piece that year. We developed that work in an empty warehouse near Flughafen Tegel, with cavernous echoes – a reminder that these systems change characteristics drastically when you move them to different acoustic environments.)

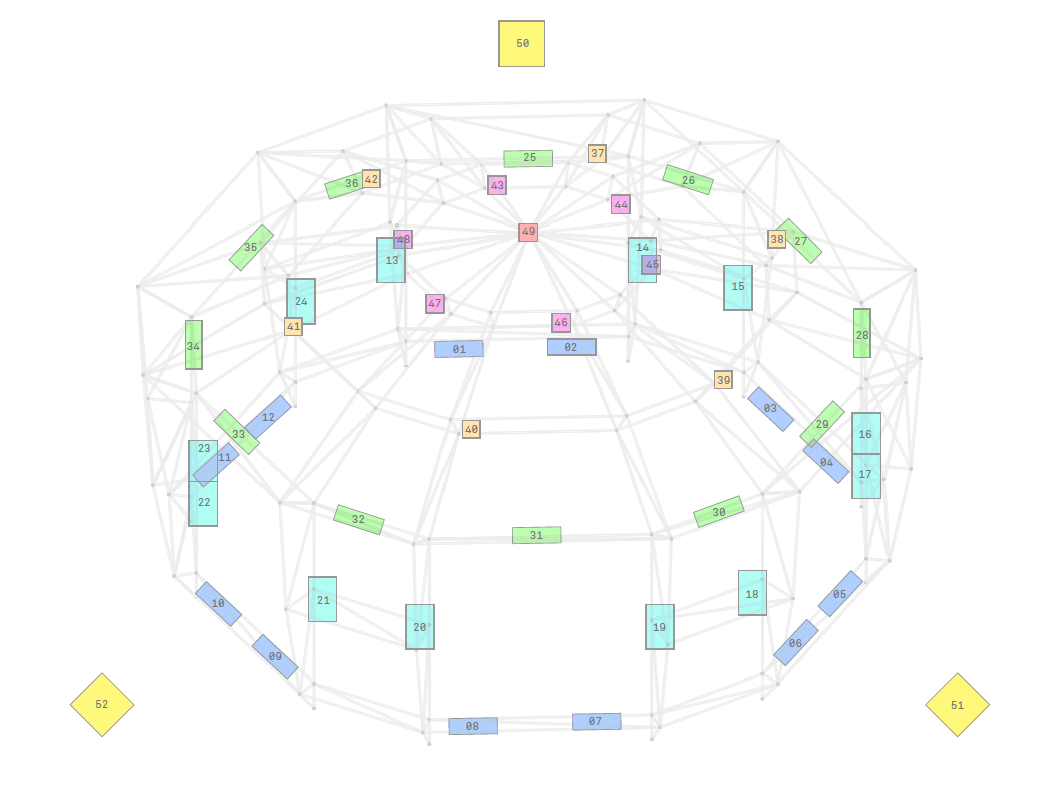

Stiftung Planetarium Berlin is routinely used for its 49 speakers and eight subs. The Spatial Media Lab has worked with the planetarium for an approach based on ambisonics – a way of standardising 3D positioning of sound as full-sphere surround, with portability across different speakers. The SML approach works with a free set of software, the IEM Plug-in Suite from Institut für Elektronische Musik und Akustik at the Kunstuniversität Graz.
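The portability of ambisonics comes from encoding direction into the signal itself rather than into speaker feeds. As a minimal sketch, the function below encodes one mono sample into first-order ambisonics using the AmbiX convention (ACN channel order W, Y, Z, X with SN3D normalisation). The function name and the angle convention (azimuth counter-clockwise from straight ahead, elevation upwards) are assumptions of this example, and decoding to a particular speaker layout is a separate step not shown here.

```python
import math
from typing import Tuple


def encode_first_order(
    sample: float, azimuth_deg: float, elevation_deg: float
) -> Tuple[float, float, float, float]:
    """Encode a mono sample as first-order ambisonics (W, Y, Z, X).

    azimuth_deg: 0 is straight ahead, positive angles turn to the left.
    elevation_deg: 0 is ear level, 90 is directly overhead.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample                               # omnidirectional part
    y = sample * math.sin(az) * math.cos(el)  # left/right
    z = sample * math.sin(el)                 # up/down
    x = sample * math.cos(az) * math.cos(el)  # front/back
    return w, y, z, x


if __name__ == "__main__":
    for label, az, el in (("front", 0, 0), ("left", 90, 0), ("above", 0, 90)):
        w, y, z, x = encode_first_order(1.0, az, el)
        print(f"{label:>5}: W={w:.2f} Y={y:.2f} Z={z:.2f} X={x:.2f}")
```

The same four channels can then be decoded for a planetarium dome, a ring of four monitors, or headphones, which is what makes the format travel well between venues.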

These tools can work live, too, not only with pre-recorded, choreographed materials as with cinema. So, for instance, when I played SML’s first event at the planetarium, I was able to work from SuperCollider live with direct positioning of instruments. I was also able to manipulate prerecorded stems via live mixing on an iPad by using a Lemur template. I even added a live mic input routed through the mixer and spatialised in real-time. Other works used frame-accurate synch (James Ginzburg) and pre-rendered ambisonic sounds (Music For Your Plants).

The result is sound positioned precisely through the dome, much as the planetarium’s own starfield can do visually (with lots of Zeiss lenses and whatnot). The architecture’s dome also mirrors the virtual dome mapped out by ambisonics – a departure from the left-right linearity of a proscenium theatre and stereo audio space.

Dark Matter has a Holosound installation by Holoplot, which uses a unique sound »beaming« technology built atop a technique called Wave Field Synthesis. This tech uses positioning so precise that you can make a voiceover whisper in one audience member’s ear if you were to so choose, or move through architectural sound in space. Applied musically via their custom control software, you can move sound through delineated volumes in the space.

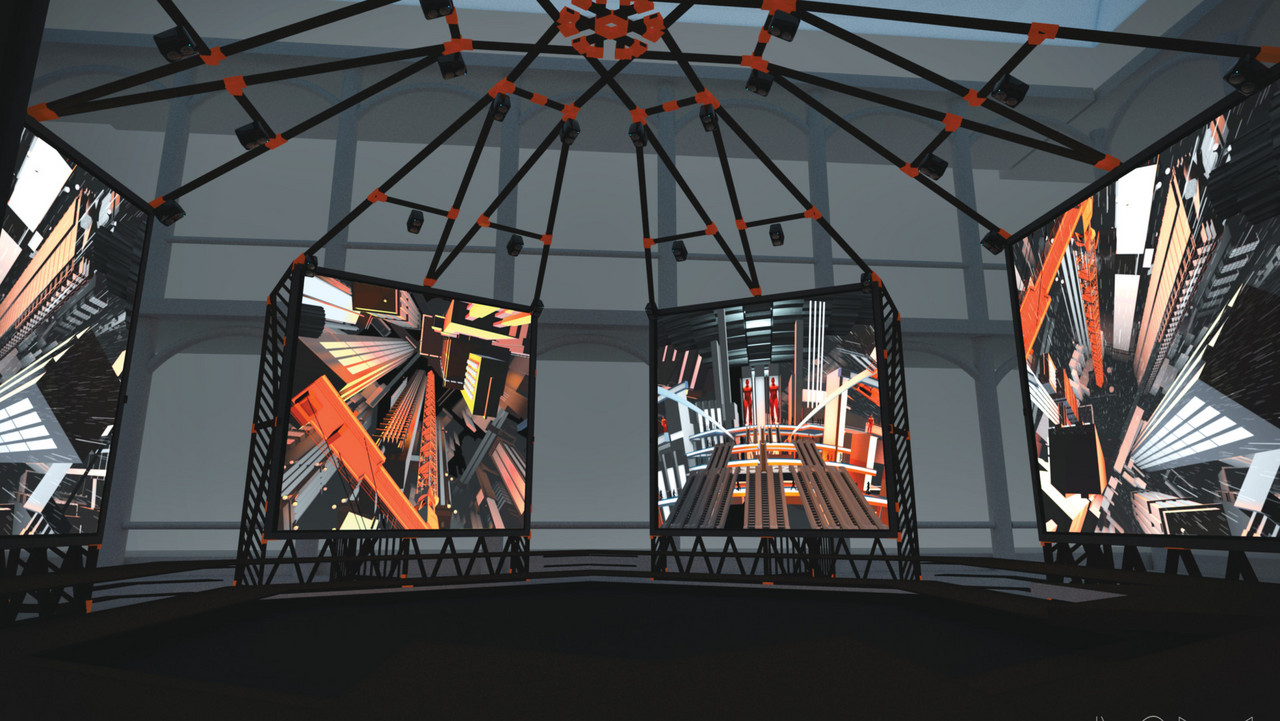

There have been temporary and moving installations, like the ISM Hexadome, which paired its six projection screens with a 52-channel surround sound system developed by ZKM Karlsruhe, in turn paired with Zirkonium software.

That’s to say nothing of Dolby Atmos studios – becoming more popular as Dolby pushes the format with Apple and other companies – plus other experimental Wave Field Synthesis setups, including a WFS lab at Technische Universität (TU) Berlin.

What all these spaces have in common is that they create a playing field for artists to experiment, learn, and develop. Nearly a century after Blumlein’s first Abbey Road orchestral recording, the novelty of stereo mixing hasn’t worn off. Mixing as science or as art form is not entirely exhausted; it still is open to new interpretations and developments. Stop by any music tech academy in town, and you’ll find students spending years honing their craft. Any time at the dead of night in Berlin, someone is staying up perfecting a mix – and that’s just stereo. Without access to quality stereo equipment – DAWs, plug-ins, mixers, microphones, headphones, studio monitors, and finally venues with left/right speaker configurations tuned for the space – stereo would be effectively untenable.

These projects also all benefit from published research – even as that research has now made it out of the lab and academy and into wider use. IRCAM offers a powerful standalone spatial mixer (Panoramix), and with private partner Flux Software, the comprehensive Spat Revolution suite of software tools. This can include not only functionality like mixing and panning in various formats, but also effects processing – since tools like reverb made primarily for stereo mixing don’t make sense in multichannel formats. The Institute of Electronic Music and Acoustics offers its free and open source IEM Plug-in Suite for ambisonics. ZKM | Hertz Lab has developed the Zirkonium spatial sound software suite, initially for its own ZKM KubusSound Dome, but used in other deployments, as well.

Gaming and virtual reality applications mean that the same approach to 360-degree sound may also become personal, delivered through VR headsets from Facebook/Oculus, Google, and gaming companies and game engines.

Removing barriers for artists

Acoustic spatial sound is fantastically accessible – we naturally sense sound through our bodies and inner ears, and even hard-of-hearing listeners can precisely pinpoint sound in 360 degrees across their hearing range. (Deaf listeners with cochlear implants can even pinpoint sound as they move their head.)

Reproducing that sound is where things get tricky. There’s one big barrier that has always slowed spatial audio adoption, and it’s the human body. To immerse you in sound with speakers, you need more speakers – and more channels to drive them. And then, while you can standardise audio formats, you can’t standardise ear shape, which explains why everyone isn’t already mixing spatial audio perfectly through headphones.

Software, at least, is becoming less of a barrier. Tools like the inexpensive DAW Reaper include capabilities for lots of channels; free plug-ins like those from IEM and some of the open source offerings from IRCAM allow little or no investment. (Apple also promises it will add Dolby Atmos support to its own DAW, Logic.)

It’s tougher to solve the challenges of headphones and speakers, because physics – and the human head – don’t change. But there, too, there are some clever ways of reproducing sound without requiring access to something like an expensive Dolby Atmos-compliant sound studio. Artist Kamran V (aka CyKik), for instance, has been championing quad setups. His work on quad included releasing a quadraphonic album (on vinyl, no less) by Suzanne Ciani. His QUARK, or Quadraphonic Universally Accessible Resource Kit, is available free. The software suite developed by San Francisco’s Envelop and artist Christopher Willits is also free, and also will work with as few as four monitors.

Essentially, any producer who can find four matched speakers has the basics of setting up a spatial mix. Then it’s possible to upmix to more channels or more complex systems, either manually or using formats that automatically adapt to larger arrays.

Binaural encoders and decoders for headphones are also improving. The challenge there is that we hear inside our heads – and our heads and ears are not the same shape. (To get an indication of just how important the shape of your ears is, you can try squeezing your ears into a different shape and then try to listen to sound and tell where it’s coming from.) For exactly calibrated headphone listening, think ear molds and the minutes or even hours spent computing the right profile – at least for now, though, software and machine learning hold some promise for easier calibration in the future.

Head tracking technologies are also becoming increasingly accessible, including new offerings from Apple in their headphones and mobile devices and various gaming and DIY options. By tracking your head, you can also produce realistic sound positions – and we’re already accustomed to cocking our head slightly in order to better localise sound, so the interaction is a natural one.
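The core of that interaction is simple to sketch: as the head turns one way, the renderer shifts each source the other way so that it stays fixed in the room. The example below handles only rotation around the vertical axis (yaw), whereas real trackers also report pitch and roll. The function name and the convention that positive angles turn to the left are assumptions of this sketch.

```python
def relative_azimuth(source_azimuth_deg: float, head_yaw_deg: float) -> float:
    """Return where a fixed source sits relative to the listener's nose.

    Both angles are in degrees, 0 is the room's front, and positive
    values turn to the left. The result is wrapped into [-180, 180).
    """
    difference = source_azimuth_deg - head_yaw_deg
    return (difference + 180.0) % 360.0 - 180.0


if __name__ == "__main__":
    # A source straight ahead in the room, while the listener looks around.
    for yaw in (-90.0, -30.0, 0.0, 30.0, 90.0):
        print(f"head yaw {yaw:+6.1f} -> source at {relative_azimuth(0.0, yaw):+6.1f}")
```

The resulting angle is what a binaural renderer would use to choose its filters for that instant, updated many times per second as the tracker reports new head positions.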

It’s the usual story – production workflows for big industry game and film/TV applications do tend to cost more. Dolby does advise mixing on a fairly elaborate 7.1.4 speaker layout. But this is not unique to these latest spatial formats; previous surround setups for film also required more advanced rigs.

For all the attention given to big standard formats, it’s also a reasonable oversimplification to say that experimental, free, and open source tools often offer the most possibilities for artistic experimentation. There’s no way to avoid the laws of physics or the shape of your ears, but thanks to the accessibility of computers and the fact that a lot of research and software is shared publicly for free, the main prerequisite investment is not money, but time. And while workflows for media like cinema benefit from standardisation, the most innovative experiments – as in the 1930s and 1940s – remain in experimental applications. The 21st century breakthrough is that the »lab« can be wherever you are, not confined to an elite institution.

The next fantasy

William E. Garity and his RCA counterpart Watson Jones reflected in 1942 after the tour of Fantasia – perhaps a bit like Berlin ISM’s own tour. They conclude with comments both on the »outstanding success« of Fantasia as proof-of-concept and on why the Fantasound approach had to be abandoned after the tour. Paraphrasing, Garity and Jones concede that the custom installation required the use of too much equipment, time, and physical space, particularly with regard to cinemas’ packed booking schedules; that there was red tape on the road from different localities; and, not incidentally, that World War II happened.

But maybe the most telling line is this: »[w]e can only express our own opinions and the opinions of those who worked with this equipment; viz., having used the multiple-track system, no matter in what form, the ordinary sound-track reproduction is flat and dull by comparison.«

Even with all the wonders of modern computation, the feat of producing spatial sound has no Sorcerer’s Apprentice-style shortcuts. The fundamental task, now as at the start, remains finding ways of constructing convincing material, focusing on telling a story, creating architecture, choreographing movement, and tuning those results to the space. It’s a lot of work, even if research into perception and reproduction has made the results more precise and accessible.

But close your eyes and listen for a few moments. Your ears and mind already perceive incredible detail of sound location across the range of frequencies you can hear – slowed only by details like reflections. Immersive audio is not a novelty in hearing or a new special effect. It is a constellation of techniques and technologies that finally brings the electronic production of sound closer to the endless possibility of acoustic sound and human perception. After all that work, it brings the sonic fantasies in our head into that natural world.

Further Reading

Garity, W. and Jones, W. (1942), »Experiences in road-showing Walt Disney’s Fantasia.« Journal of the Society of Motion Picture Engineers. Retrieved from http://www.widescreenmuseum.com/sound/fantasound3.htm

Pollard, Justin (2019), »The eccentric engineer: How Mickey Mouse and ›Fantasound‹ revolutionised film music.« Engineering and Technology. Retrieved from https://eandt.theiet.org/content/articles/2019/11/the-eccentric-engineer-how-mickey-mouse-and-fantasound-revolutionised-film-music/