This 3-hour workshop introduces machine learning and generative art techniques and shows how to work with datasets or any acquired data of your choice. We will explore and analyze a contemporary technique for generative music composition that combines machine learning with data as an external source of sonic influence.

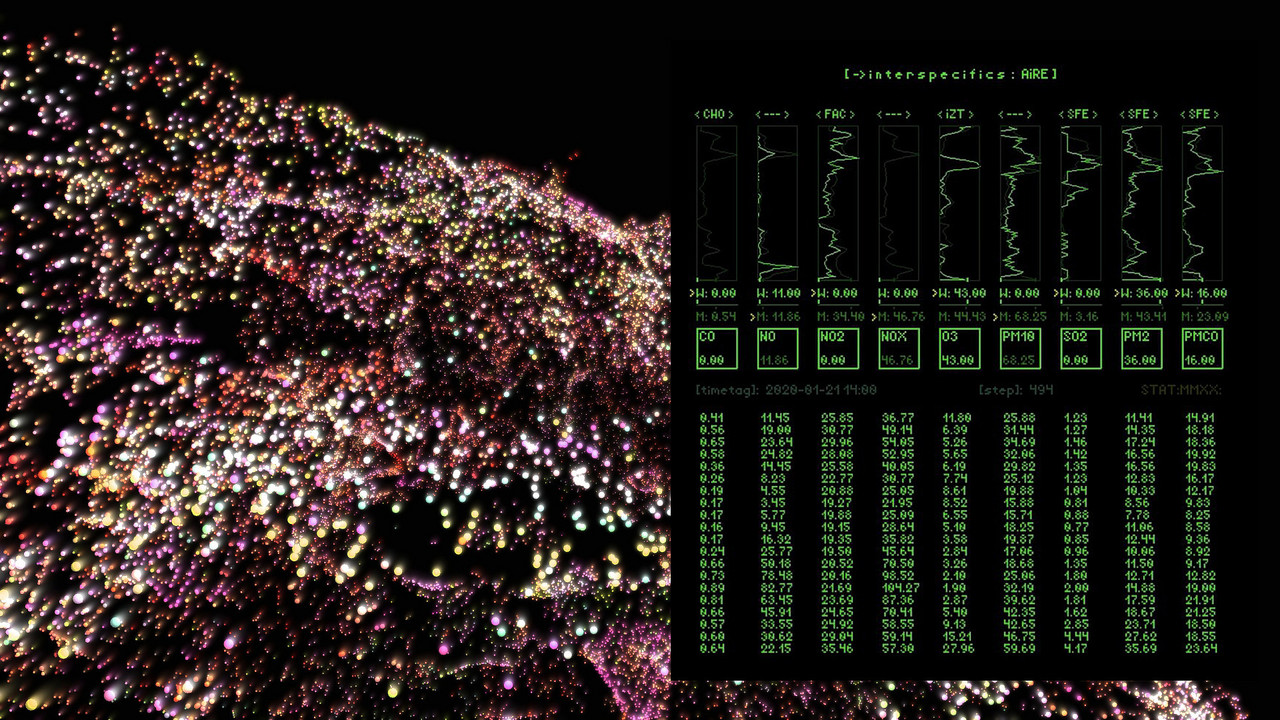

We will use an open-source pattern-recognition application developed by our collective to map data streams to sound event triggers and modulators in Ableton Live via OSC (Open Sound Control). This data-driven strategy also works in hybrid setups that combine synthesis software with physical hardware. Unlike classic sonification techniques, using data as an aesthetic element opens up new shapes and possibilities for sonic and visual improvisation.
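To give a rough idea of what mapping a data stream to triggers and modulators over OSC can look like, here is a minimal Python sketch using only the standard library. It is not the collective's application: the addresses `/data/mod` and `/data/trigger`, the port `8000`, the threshold, and all function names are assumptions chosen for illustration. Each value is rescaled to the 0..1 range and sent as a modulator, and a trigger message is sent whenever the value rises across the threshold.

```python
import socket
import struct


def osc_pad(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def osc_message(address: str, value: float) -> bytes:
    """Build an OSC message carrying a single 32-bit big-endian float."""
    return osc_pad(address) + osc_pad(",f") + struct.pack(">f", value)


def normalize(values):
    """Rescale values linearly to 0..1 so they can drive a modulator."""
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        return [0.0 for _ in values]
    return [(v - lo) / span for v in values]


def triggers(values, threshold):
    """Mark the points where the stream rises across the threshold."""
    flags = []
    was_above = False
    for v in values:
        is_above = v >= threshold
        flags.append(is_above and not was_above)
        was_above = is_above
    return flags


def send_stream(values, host="127.0.0.1", port=8000, threshold=0.8):
    """Send each value as a modulator and each rising edge as a trigger.

    The host, port, and OSC addresses are hypothetical; match them to
    whatever your OSC receiver is configured to listen for.
    """
    scaled = normalize(values)
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        for value, fire in zip(scaled, triggers(scaled, threshold)):
            sock.sendto(osc_message("/data/mod", value), (host, port))
            if fire:
                sock.sendto(osc_message("/data/trigger", 1.0), (host, port))
    finally:
        sock.close()
```

Calling `send_stream([12.0, 15.5, 30.2, 28.9, 11.0])` would send one modulator message per value, plus a trigger message wherever the rescaled value crosses 0.8 from below. On the receiving side, those addresses would still need to be mapped to parameters or clips.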

Requirements

Participants should have experience using Ableton Live and prior experience with hardware synthesizers. They should also have access to:

- a computer (macOS or Windows) and headphones

- Ableton Live 10 Suite (download trial version here)

- OSCulator (download trial version here)

We will also demonstrate how to control physical hardware with data signals. If you would like to follow this section hands-on, you will need:

- a control voltage (CV) compatible synthesizer

- a sound card with DC coupling

- the CV Tools package (download here)